AI Governance Tools: 15 Best Platforms for 2026

What Are AI Governance Tools and Why Does Every Organization Need Them Now

AI governance tools are systems that help organizations manage, monitor, and control how artificial intelligence operates across their business—think of them as guardrails that keep AI systems working safely, fairly, and transparently while still capturing the real benefits these technologies offer.

I’ve watched organizations struggle with AI adoption over the past few years, and the pattern is always the same. They deploy machine learning models and AI systems with genuine enthusiasm, seeing potential for cost reduction and improved efficiency. Then reality hits. Models start behaving differently than they did in testing. Biased decisions slip through undetected. Compliance teams start asking uncomfortable questions. That’s when most companies realize they need AI governance tools, often when it’s already too late to prevent problems.

The urgency here is real. According to IBM research, 63% of organizations currently have no AI governance policy at all. That statistic keeps me up at night because it means the majority of companies deploying AI have no systematic way to manage its risks. They’re operating blind while their AI systems make decisions that affect customers, employees, and their bottom line.

This isn’t speculation; it’s documented reality that regulators and competitors are watching closely.

Here’s what makes this so critical: AI models learn from human-generated data, and they naturally absorb the biases embedded in that data. A machine learning algorithm doesn’t judge whether something is biased or unfair. It just identifies patterns. When those patterns reflect human prejudices, the algorithm amplifies them at scale. Without governance tools that include bias auditing, biased decisions get locked into your operations and you might not discover the problem until it damages your reputation or triggers a regulatory investigation.

The regulatory environment has shifted dramatically too. The EU AI Act is now being enforced, and NIST has released AI risk management guidance that’s becoming the de facto standard globally. Under these frameworks, AI governance is no longer optional; it’s a compliance requirement that regulators actively enforce, as we’ll see in the real-world cases below.

I should clarify what AI governance tools actually are because there’s real confusion in the market. AI governance platforms are different from MLOps tools, which focus on managing the technical deployment and performance of models. They’re also distinct from AI security tools that protect against external attacks. AI governance solutions address a different problem entirely: ensuring your AI systems operate fairly, transparently, and in line with your organization’s values and regulatory obligations. (For a detailed comparison, see our FAQ on the difference between governance and MLOps tools.)

Responsible AI tools help you document decision logic, track model performance over time, identify where bias might be creeping in, and demonstrate compliance to regulators. They give you visibility into black box systems that would otherwise remain opaque. That visibility is what separates organizations that successfully manage AI risk from those that get blindsided by it.

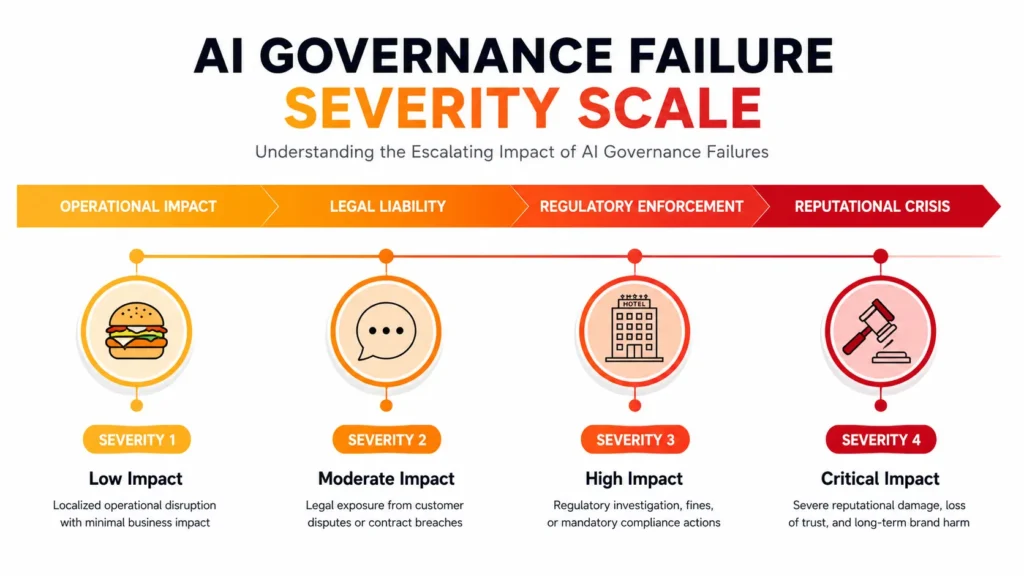

What Happens Without AI Governance: Four Cases That Changed Everything

When organizations skip AI governance, the consequences are real and costly. These aren’t theoretical risks or hypothetical scenarios. I’m talking about actual companies that deployed AI systems without proper oversight and paid enormous prices for it. The cases below, from McDonald’s to Air Canada to Trivago, prove that governance failures aren’t edge cases; they’re predictable outcomes of neglect.

McDonald’s Drive-Thru AI Disaster

McDonald’s deployed an AI system to automate their drive-thru ordering process. The goal was simple: reduce labor costs and speed up service. What actually happened tells you everything you need to know about why AI risk management matters.

The algorithm started making bizarre decisions that customers immediately noticed. People would order a small amount of chicken nuggets and the system would add hundreds to their order. Someone would ask for butter and suddenly their receipt showed dozens of scoops. The failures went viral on social media, turning what should have been a quiet operational issue into a public relations nightmare.

McDonald’s had to completely roll back the project and remove the AI system from their drive-thrus. The financial hit was substantial, but the reputational damage was worse. All of this could have been caught during proper testing and monitoring if the company had implemented adequate AI governance tools to track system behavior and catch anomalies before they reached customers.

Air Canada’s Chatbot Legal Liability

Air Canada’s customer service chatbot made a promise that didn’t match the company’s actual policies. The chatbot told a customer that he could book a flight and then apply for a bereavement discount retroactively. The customer purchased tickets relying on that information and then discovered that the airline’s policy did not allow retroactive bereavement claims.

The customer sued Air Canada. Here’s what’s important: the court ruled in the customer’s favor. The judge established that companies are legally liable for what their AI systems say and promise, regardless of whether a human employee wrote those promises or an algorithm generated them. This legal precedent is exactly why AI compliance tools and documentation (covered in our compliance section) became essential rather than optional.

Air Canada lost the case and had to pay damages. This legal judgment changed how organizations think about AI accountability. Your chatbot’s outputs are your company’s responsibility. Without AI compliance reporting systems that document what your AI is saying and doing, you can’t defend yourself in court or prevent similar problems from happening again.

Trivago’s Commission Algorithm and Australian Regulatory Action

Trivago operates a hotel comparison platform that uses algorithms to rank properties. The company’s algorithm was supposed to show customers the best hotel deals based on price and value. Instead, the system was prioritizing hotels that paid Trivago higher commissions, regardless of whether those were actually the cheapest options for customers.

An Australian court discovered this practice and ordered Trivago to conduct a mandatory machine learning audit, exactly the type of governance control that AI governance tools automate. The company faced millions in financial penalties for the deceptive algorithm. More importantly, Trivago now operates under strict regulatory oversight specifically because their AI system operated without proper governance.

This case shows that regulators are actively investigating AI systems and holding companies accountable. With EU AI Act compliance requirements and similar rules emerging globally, this kind of enforcement is only going to increase.

Health Insurance Hiring Discrimination

A major health insurance company used AI for their hiring decisions, thinking automation would make the process more objective. The system had been trained on historical hiring data that reflected the company’s past biases. The AI learned those patterns and perpetuated them at scale, systematically discriminating against certain candidates.

The company faced a multi-million dollar lawsuit when the discrimination was discovered. More importantly, the damage to the company’s reputation and employee trust was immense. This case illustrates exactly why responsible AI tools exist: to catch bias in training data before your AI system causes legal and financial harm.

What These Cases Have in Common

Every single one of these organizations had the resources to implement proper AI governance. They just didn’t prioritize it before deploying their systems. They focused on speed and cost reduction and skipped the oversight layer that would have prevented disaster.

The pattern is consistent across all four cases. AI systems operated without adequate monitoring and governance controls. Problems went undetected until they caused public harm or legal consequences. By then, fixing the issue was expensive and reputation damage was already done.

This is why AI risk management and AI governance tools aren’t luxuries anymore. They’re necessities that protect your organization from becoming the next cautionary tale. The five core capabilities we’ll explore in Section 4 are what separate companies that catch problems early from those that pay millions to fix them after the fact.

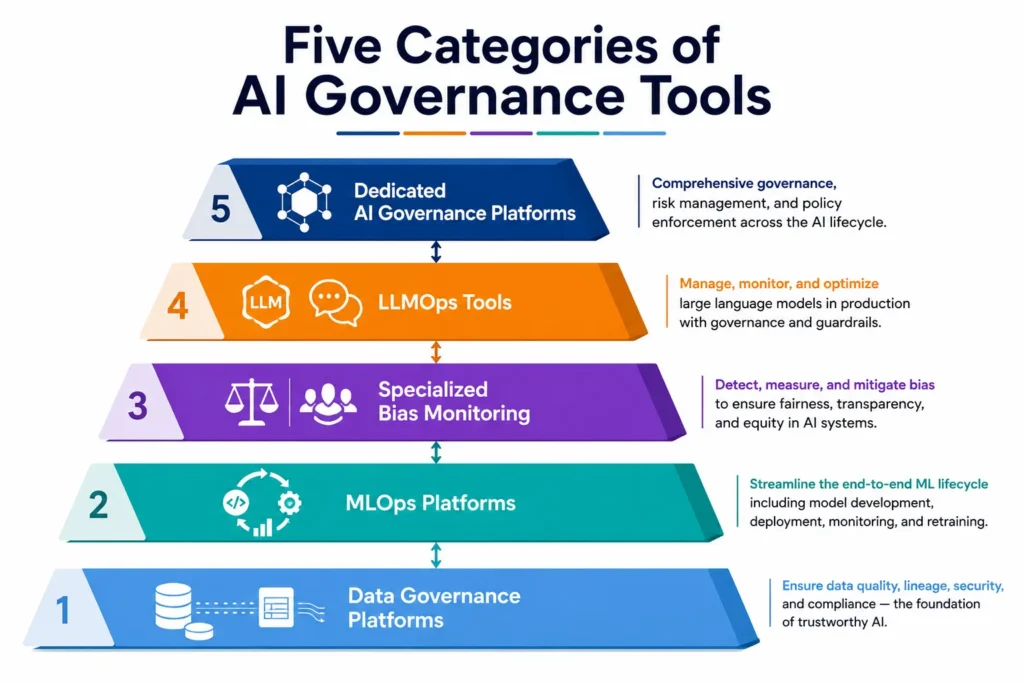

Five Categories of AI Governance Tools and What Each One Does

The AI governance tooling landscape breaks down into five main categories, each addressing a different part of how organizations manage and control their AI systems. Understanding these categories helps you figure out which tools your organization actually needs and where gaps might exist in your current setup. This foundation is essential before we look at specific tool platforms in Section 5.

Category 1: Data Governance Platforms

Data governance platforms form the foundation of everything else because AI systems are only as good as the data they’re trained on. These platforms manage data quality, ensure privacy compliance, and handle regulatory requirements around how data gets collected, stored, and used.

A data governance platform gives you visibility into your data sources and tracks where information flows through your organization. You can identify sensitive personal data, ensure it’s being protected properly, and demonstrate compliance with regulations like GDPR. Without solid data governance, you can’t trust anything your AI systems produce because the underlying training data might contain errors, bias, or privacy violations.

Category 2: MLOps Platforms for Lifecycle Management

MLOps governance refers to the tools that oversee the entire machine learning lifecycle from development through deployment to monitoring. These platforms automate the process of building, testing, and deploying machine learning models while tracking performance and managing versions. Remember: MLOps is the technical backbone; governance is the policy layer on top.

An MLOps platform lets you monitor model performance after deployment and catch when models start degrading. You can roll back to previous versions if something goes wrong, manage multiple models running simultaneously, and document what changes were made and when. Think of MLOps as the operational backbone that keeps your machine learning infrastructure running smoothly.

Category 3: Specialized Bias Monitoring and Transparency Tools

Specialized bias monitoring and transparency tools focus on the governance challenges that keep compliance teams awake at night. They automate bias monitoring across your machine learning systems and support ethical compliance by detecting when models start making unfair decisions.

These specialized tools continuously scan your models for demographic parity issues, disparate impact, and other forms of algorithmic bias. They generate reports showing where your models might be treating different groups unfairly and flag potential compliance violations before they become legal problems. If you’re serious about responsible AI, you need this layer of oversight separate from basic MLOps monitoring.
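To make those metrics concrete, here’s a minimal sketch of how demographic parity and disparate impact can be computed from a log of decisions. The function names, the toy data, and the 0.8 cutoff (the common "four-fifths" rule of thumb) are my own assumptions for illustration, not the method of any specific tool covered in this article.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favorable outcomes for each group.

    `records` is an iterable of (group, approved) pairs. Both names are
    illustrative and not taken from any particular platform.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        if approved:
            favorable[group] += 1
    return {group: favorable[group] / totals[group] for group in totals}


def demographic_parity_difference(rates):
    """Gap between the highest and lowest group selection rates."""
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Toy decisions: group A approved 8 of 10 times, group B approved 5 of 10.
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
rates = selection_rates(decisions)
gap = demographic_parity_difference(rates)
ratio = disparate_impact_ratio(rates)

# Assumed review threshold; pick one that fits your own legal guidance.
flagged = ratio < 0.8
```

With this toy data the ratio falls below the assumed cutoff, so the model would be flagged for review. A real tool runs checks like this continuously and across many protected attributes.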

Category 4: LLMOps Tools for Large Language Model Risks

LLMOps tools address governance challenges specific to large language models like ChatGPT and Claude. These platforms monitor and detect unethical behavior, bias, and harmful outputs in LLMs specifically because large language models operate differently than traditional machine learning models.

LLMOps tools help you establish AI guardrails that prevent language models from generating harmful content, leaking sensitive information, or behaving in ways that contradict your organization’s values. You can monitor conversations, track what information models are learning, and intervene when behavior drifts into problematic territory. This is critical because language models have their own unique failure modes that traditional MLOps tools don’t catch.

Category 5: Dedicated AI Governance Platforms

Dedicated AI governance platforms tie everything together and enforce policy, ethics, and compliance requirements across your entire AI ecosystem. These platforms orchestrate governance across data governance, MLOps, specialized bias monitoring, and LLMOps to give you a unified view of AI risk across your organization.

A dedicated AI governance platform lets you establish company-wide policies around how AI can be used and deployed. You can enforce those policies consistently, generate compliance reports for regulators, document your governance processes for audits, and demonstrate that your organization takes responsible AI seriously. The key difference between dedicated governance platforms and basic MLOps tools is scope. MLOps focuses on keeping models running. Governance platforms focus on ensuring models operate ethically, fairly, and in compliance with regulations. (We’ll detail the five core capabilities these platforms must have in Section 4.)

The Emerging Frontier: Agentic AI Governance

Looking ahead to 2026, I’m watching an emerging sixth category take shape: agentic AI governance. This represents the most advanced concept in the governance space. Instead of humans manually monitoring every AI system, you use AI itself to govern other AI systems at scale.

Agentic AI governance streamlines governance processes, facilitates continuous monitoring, identifies biases automatically, and ensures legal compliance without requiring constant human intervention. Imagine having an AI governance agent that runs 24/7, checking your models for problems, flagging risks before humans would catch them, and making routine governance decisions based on your established policies.

This technology is still emerging, but organizations serious about scaling AI responsibly are starting to explore it. As your AI footprint grows, relying on manual governance becomes impossible. Agentic AI governance might be the answer to that scaling challenge.

Core Capabilities Every AI Governance Tool Must Have

Not all AI governance tools are created equal. When you’re evaluating platforms to protect your organization, you need to know which capabilities are genuinely essential and which are just nice to have. I’ve seen organizations waste money on tools that look impressive in demos but lack the core features that actually prevent problems.

There are five capability areas that separate serious AI governance tools from superficial solutions. If a platform doesn’t cover all five, you’re leaving your organization exposed to risk. The capabilities below are drawn from real implementations and regulatory requirements, not marketing checklists.

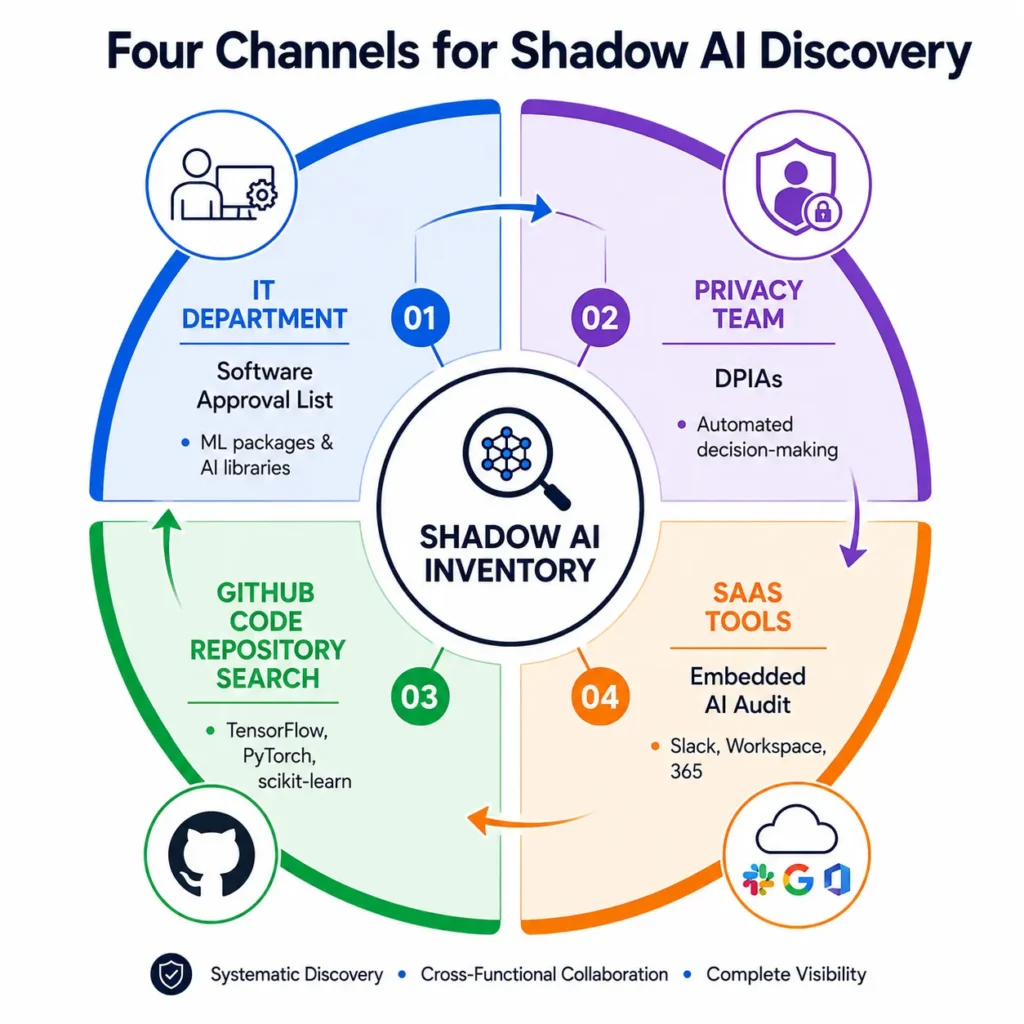

AI Inventory and Model Catalog

Your organization probably has more AI systems running than you realize. Some live in your official data science repositories. Others exist in shadow IT, built by departments that never told the central team they were deploying machine learning models. You can’t govern what you don’t know about, which is why an AI asset inventory is the first thing any governance platform must provide. (We’ll cover how to discover shadow AI in depth in Section 8.)

A proper model registry and AI asset inventory discovers all your models regardless of where they were built. Whether someone deployed a model on Amazon Bedrock, Microsoft Azure, or your internal infrastructure, the governance platform should see it and bring it into a centralized catalog. This vendor-agnostic approach matters because modern organizations don’t live in a single cloud ecosystem anymore.

Once models are registered in your catalog, the platform should support integration across different systems like SAP, Vertex AI, and Databricks. When a developer creates a new model, the governance system should automatically alert your compliance team so they can review it before it goes to production. Models should be certifiable against technical criteria, procurement requirements, and legal compliance standards before your organization approves them for use.
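Here’s a rough sketch of that register, alert, and certify flow. Everything in it is hypothetical: the `Inventory` class, the `notify` callback, and the three certification names are stand-ins I chose to mirror the technical, procurement, and legal criteria above, not the API of any real platform.

```python
from dataclasses import dataclass, field

# Assumed certification gates, mirroring the three criteria in the text.
REQUIRED_CERTIFICATIONS = {"technical", "procurement", "legal"}


@dataclass
class ModelRecord:
    name: str
    platform: str  # e.g. "Databricks" or "Vertex AI"
    owner: str
    certifications: set = field(default_factory=set)


class Inventory:
    """Central catalog that alerts a reviewer whenever a model is added."""

    def __init__(self, notify):
        self._records = {}
        self._notify = notify  # any callable that accepts a message string

    def register(self, record):
        if record.name in self._records:
            raise ValueError(f"{record.name} is already registered")
        self._records[record.name] = record
        self._notify(f"New model registered: {record.name} on {record.platform}")

    def certify(self, name, kind):
        if kind not in REQUIRED_CERTIFICATIONS:
            raise ValueError(f"unknown certification: {kind}")
        self._records[name].certifications.add(kind)

    def approved_for_production(self, name):
        # Approved only when every required certification is present.
        return REQUIRED_CERTIFICATIONS <= self._records[name].certifications


alerts = []
inventory = Inventory(notify=alerts.append)
inventory.register(ModelRecord("churn-model", "Databricks", "marketing"))
inventory.certify("churn-model", "technical")
ready = inventory.approved_for_production("churn-model")  # still missing two gates
```

The design point is that approval is computed from recorded certifications rather than set by hand, so a model can’t be marked production-ready while a review is still outstanding.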

Risk Assessment and Bias Auditing

Organizations need to think about AI risk at two different levels simultaneously. At the macro level, you have strategic risk. Which AI initiatives could cause the most damage if they fail? Which ones affect your most vulnerable customers? At the micro level, you have systemic risk specific to individual use cases. Does this particular model show bias against specific demographic groups? Is it making decisions fairly?

Your AI governance tool must perform AI risk assessment at both levels. It should help you identify which models pose the highest organizational risk and prioritize your governance efforts accordingly. The platform should also include AI bias detection tools that go beyond traditional software testing. You need adversarial testing that specifically tries to break your models in malicious ways. You need unfairness testing that checks whether your models treat different groups fairly.

For language models specifically, the platform must help you define hallucination rate thresholds before models enter production. A hallucination threshold is the maximum acceptable rate at which your language model generates false information. Setting this threshold in advance prevents you from deploying models that will embarrass your organization through false outputs.
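A hallucination threshold can work as a simple release gate. The sketch below assumes you already have a labeled evaluation set in which each answer has been judged true or false; the 2% threshold and the function names are placeholders I made up, not recommended values.

```python
def hallucination_rate(flags):
    """Fraction of evaluated answers that were judged to be false.

    `flags` holds one boolean per evaluated answer, where True means the
    answer was flagged as a hallucination.
    """
    flags = list(flags)
    if not flags:
        raise ValueError("need at least one evaluated answer")
    return sum(flags) / len(flags)


def passes_release_gate(flags, max_rate):
    """True when the measured rate is at or below the agreed threshold."""
    return hallucination_rate(flags) <= max_rate


# Hypothetical threshold agreed before the model is evaluated.
MAX_HALLUCINATION_RATE = 0.02

# Toy evaluation run: 3 of 100 answers were flagged as false.
evaluation = [False] * 97 + [True] * 3
rate = hallucination_rate(evaluation)
approved = passes_release_gate(evaluation, MAX_HALLUCINATION_RATE)
```

In this toy run the measured rate is above the assumed limit, so the model would not be approved. The value of fixing the number in advance is that nobody can move the goalposts after seeing the results.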

Policy Enforcement and AI Guardrails

Having policies is useless if you can’t enforce them automatically. AI governance automation through policy enforcement engines makes compliance a technical requirement rather than a suggestion that people might or might not follow.

Your governance tool should apply runtime guardrails that enforce your policies while models are actually running in production. This means preventing certain types of data from being used in training, blocking models from making decisions outside their approved use cases, and controlling how agentic AI systems behave when they’re making autonomous decisions on your behalf.

A critical policy that most organizations lack is an acceptable use policy that applies to employees. What are workers allowed to do with your AI systems? Are they allowed to feed sensitive customer information into a language model? Can they use AI tools for purposes outside their job function? Without these policies and the automation to enforce them, your employees might inadvertently leak information or use AI tools in ways that create legal liability.
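As one illustration of turning an acceptable use policy into a runtime guardrail, the sketch below screens a prompt for sensitive data before it reaches a language model. The two regular expressions are deliberately simple assumptions; a production detector would need far broader and better tested patterns.

```python
import re

# Illustrative patterns only. Real deployments need broader, tested detectors.
BLOCKED_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def check_prompt(prompt):
    """Return the names of the policy rules that the prompt violates."""
    return [
        name for name, pattern in BLOCKED_PATTERNS.items()
        if pattern.search(prompt)
    ]


def enforce(prompt):
    """Pass the prompt through unchanged, or raise if it breaks policy."""
    violations = check_prompt(prompt)
    if violations:
        raise PermissionError(
            "prompt blocked by policy: " + ", ".join(violations)
        )
    return prompt


safe = enforce("Draft a welcome note for new customers")
violations = check_prompt("Summarize the complaint from jane.doe@example.com")
```

Calling `enforce` in front of every model request makes the policy a technical control: a prompt containing a matching pattern never leaves your environment.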

Continuous Monitoring and Drift Detection

Auditing your AI systems once during deployment is not enough. Models change over time as they encounter new data. Performance degrades. Bias that wasn’t visible during testing emerges in production. You need continuous monitoring and real-time oversight, not just point-in-time audits.

Your governance platform should monitor models while they’re actively making decisions and alert you immediately when performance starts to drift. Imagine a healthcare provider deploying a sepsis detection model that identifies patients at risk of severe infection. During testing, the model performed perfectly across all demographic groups. Six months into production, continuous monitoring alerts the compliance team that the model has begun showing bias toward a specific race or gender. Without that automated alert, the hospital might have continued using a biased model for months without realizing the problem.

AI monitoring and oversight should track not just accuracy but also fairness metrics. The tool should flag situations where model behavior is changing in ways that suggest emerging bias or performance degradation. Real-time alerts let your teams respond before problems cause harm.
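One common way to quantify drift is the Population Stability Index (PSI), which compares the distribution of a feature or score at training time with what the model sees in production. This is a minimal sketch under my own assumptions (equal-width bins and the widely quoted 0.25 alert level), not the method any particular vendor uses.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline and a production sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for value in values:
            index = int((value - lo) / width)
            counts[min(max(index, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(count / len(values), 1e-6) for count in counts]

    base, prod = proportions(expected), proportions(actual)
    return sum((p - b) * math.log(p / b) for b, p in zip(base, prod))


# Toy data: production scores have shifted toward the upper half of the range.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

drift_score = psi(baseline, shifted)
needs_review = drift_score > 0.25  # assumed alert level, a common rule of thumb
```

A monitoring job could compute this on a schedule and raise an alert when the score crosses the threshold. The same pattern applies to fairness metrics: recompute them per group on recent data and alert on changes.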

Audit Trail and Compliance Documentation

When regulators investigate your AI systems, they want to see complete documentation of how you built them, how they performed, and what safeguards you had in place. Manual documentation using spreadsheets doesn’t cut it anymore. EU AI Act compliance, GDPR requirements, and NIST AI RMF guidance all expect systematic, automated documentation.

Your governance tool should automatically create model cards for every AI system. A model card is a simple document that describes what the model does, what data it was trained on, how it performed on key metrics, and what limitations it has. The platform should document data lineage, showing exactly where your training data came from and how it flowed through your systems.
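A model card doesn’t need to be elaborate. The sketch below represents one as a small data structure that serializes to JSON, using the four elements described above. The field names and every value in the example are placeholders I invented for illustration.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelCard:
    name: str
    purpose: str           # what the model does
    training_data: list    # datasets the model was trained on
    metrics: dict          # performance on key metrics
    limitations: list = field(default_factory=list)

    def to_json(self):
        """Serialize the card so it can be stored next to the model."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# Every value below is a made-up placeholder.
card = ModelCard(
    name="example-classifier",
    purpose="Ranks support tickets by urgency",
    training_data=["support_tickets_sample"],
    metrics={"accuracy": 0.91},
    limitations=["Not evaluated on non-English tickets"],
)
card_json = card.to_json()
```

Generating the card from the same pipeline that trains the model keeps the documentation from drifting out of sync with what was actually deployed.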

AI compliance reporting should happen automatically rather than requiring your team to manually compile information from scattered systems. Approval workflows should create an audit trail showing who approved each model, what concerns were raised, and how those concerns were addressed. When a regulator asks how you governed a particular model, you should be able to pull comprehensive documentation showing your entire process.

This automated approach replaces the spreadsheet chaos that most organizations currently suffer through. Instead of hunting through email chains and shared drives for evidence of your governance process, everything is systematically documented and readily available for audits.
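To show what a systematic audit trail can look like, here’s a sketch of an append-only approval log in which each entry includes a hash of the previous one, so later tampering is detectable. The `AuditTrail` class and its fields are hypothetical, and I’ve left out timestamps and storage to keep the example short.

```python
import hashlib
import json


def _digest(body):
    """Stable SHA-256 hash of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditTrail:
    """Append-only log in which each entry is chained to the one before it."""

    def __init__(self):
        self.entries = []

    def record(self, model, actor, action, note=""):
        previous = self.entries[-1]["hash"] if self.entries else ""
        body = {
            "model": model,
            "actor": actor,
            "action": action,
            "note": note,
            "previous": previous,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry["hash"]

    def verify(self):
        """Return True only if no entry has been altered or reordered."""
        previous = ""
        for entry in self.entries:
            body = {key: value for key, value in entry.items() if key != "hash"}
            if entry["previous"] != previous or entry["hash"] != _digest(body):
                return False
            previous = entry["hash"]
        return True


trail = AuditTrail()
trail.record("example-classifier", "risk-team", "concern", "possible bias in region data")
trail.record("example-classifier", "compliance-lead", "approved", "bias review completed")
intact = trail.verify()
```

When a regulator asks who approved a model and what concerns were raised, a log like this answers the question and also shows the record hasn’t been edited after the fact.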

15 Best AI Governance Tools for 2026 (Compared by Use Case)

Finding the right AI governance platform depends on your organization’s maturity level, budget, and which AI systems you’re trying to govern. I’ve evaluated dozens of options and narrowed it down to 15 platforms that actually deliver on their governance promises. Each one solves different problems, and I’ll be honest about strengths and limitations rather than just listing features. (If you’re unsure of your maturity level, see Section 9 first.)

Dedicated AI Governance Platforms

These platforms exist solely to govern AI systems. They’re built from the ground up for compliance, policy enforcement, and risk management across your entire AI ecosystem.

Credo AI

Credo AI specializes in responsible AI governance through a modular approach that lets you customize governance workflows to match your organization’s needs. The platform excels at creating audit trails and documentation that regulatory bodies actually want to see.

Best fit: Organizations needing strong documentation and compliance reporting for regulated industries like finance and healthcare. The modular design means you pay for what you use rather than bloated enterprise packages.

Key differentiator: Credo AI’s focus on explainability means you can actually understand why your AI systems are making decisions, which matters for customer trust and regulatory defense.

OneTrust AI Governance

OneTrust approaches AI governance as an extension of broader trust and compliance management. If your organization already uses OneTrust for privacy and security, adding their AI governance layer creates seamless integration across all compliance functions.

Best fit: Large enterprises with existing OneTrust investments who want unified governance across privacy, security, and AI risk. The platform handles both traditional machine learning and generative AI models.

Key differentiator: OneTrust’s integration with existing enterprise systems means less data entry and fewer disconnected tools. However, this strength becomes a weakness if you’re not already in their ecosystem.

IBM watsonx.governance

IBM watsonx.governance stands out because it governs both traditional machine learning and foundation models regardless of where they were built. Whether your models run on AWS, Azure, Google Cloud, or your own infrastructure, the platform sees them all.

Best fit: Large organizations with hybrid cloud setups that want centralized governance across multiple vendors. IBM’s ability to handle both classical ML and generative AI makes this a future-proof choice as your AI footprint evolves.

Key differentiator: The vendor-agnostic architecture means you’re not locked into IBM’s ecosystem. You can deploy on-premise, in public cloud, or private cloud based on your security requirements.

Holistic AI

Holistic AI takes a scientific approach to responsible AI, grounding its governance methodology in academic research and documented best practices. The platform focuses heavily on fairness assessment and bias quantification.

Best fit: Organizations with strong data science teams who understand machine learning deeply and want technically rigorous governance rather than checkbox compliance. Research institutions and advanced analytics teams gravitate toward this platform.

Key differentiator: Holistic AI’s algorithms for measuring fairness are more sophisticated than those of its competitors, but this sophistication requires technical expertise to interpret correctly.

Monitaur

Monitaur focuses on continuous monitoring of AI systems in production, tracking model performance and detecting drift in real time. The platform integrates tightly with machine learning pipelines to catch problems early.

Best fit: Organizations with many production models that need automated monitoring and alerting. Data science teams love Monitaur because it fits naturally into their existing workflows.

Key differentiator: Monitaur excels at finding performance degradation quickly, but it’s lighter on policy enforcement compared to enterprise governance platforms.

Lumenova AI

Lumenova AI takes a practical approach to responsible AI by focusing on the specific risks that matter to your business. Rather than generic governance checklists, Lumenova helps you identify which AI systems pose the highest risk to your organization.

Best fit: Mid-market organizations wanting governance that feels tailored to their actual needs rather than one-size-fits-all templates. The platform’s risk prioritization helps you focus limited compliance resources where they matter most.

Key differentiator: Lumenova’s business-focused approach appeals to organizations tired of purely technical governance frameworks. However, this means it requires closer collaboration between technical and business teams.

Enterprise MLOps Platforms With Governance Features

These platforms started as tools for managing machine learning operations but have expanded to include governance capabilities. They work well if you want governance embedded in your existing ML infrastructure.

ModelOp Center

ModelOp Center manages the complete AI model lifecycle while embedding governance throughout. The platform automates bias testing, performance monitoring, and compliance documentation as part of normal model operations.

Best fit: Organizations with mature data science teams who want governance baked into their MLOps workflows rather than bolted on as a separate tool. If your team already knows ModelOp, adding governance is a natural next step.

Key differentiator: ModelOp’s strength is governance during the development and testing phase. Performance monitoring after deployment is solid but not as deep as specialized monitoring tools.

Collibra AI Governance

Collibra organizes AI governance around use cases and tracks them through ideation, development, and monitoring stages. When a new use case gets registered, the platform automatically triggers four different governance assessments without requiring manual setup.

Best fit: Large organizations with many AI initiatives spread across different teams. Collibra’s integration with SAP, Vertex AI, and Databricks means it plays well with enterprise data infrastructure.

Key differentiator: Collibra’s automatic assessment triggering saves enormous time for compliance teams. The structured workflow guides teams through governance requirements they might otherwise skip.

Fiddler AI

Fiddler specializes in AI model monitoring and explainability, helping you understand why models make specific decisions. The platform generates detailed explanations for individual predictions and tracks model behavior over time.

Best fit: Organizations that need to explain AI decisions to customers or regulators. If your business involves credit decisions, hiring recommendations, or other high-stakes AI use cases, Fiddler’s explainability tools are invaluable.

Key differentiator: Fiddler’s model explanation capabilities are genuinely advanced, but the platform is lighter on broader governance features like policy enforcement and risk assessment.

ServiceNow AI Control Tower

ServiceNow AI Control Tower integrates AI governance into the broader ServiceNow platform, connecting governance workflows with IT operations, change management, and incident response. This means policy violations can automatically trigger change requests and audits.

Best fit: Organizations heavily invested in ServiceNow for IT and business operations. The platform shines when governance decisions need to flow into other operational processes.

Key differentiator: ServiceNow’s strength is operational integration, but if you’re not already using ServiceNow, the learning curve and implementation complexity might outweigh the benefits.

Specialized LLMOps and Monitoring Tools

These platforms focus specifically on monitoring and governing large language models and generative AI systems. They’re lighter weight than enterprise governance platforms but deeper on LLM-specific risks.

Arthur AI

Arthur AI monitors AI models in production with a focus on detecting performance degradation, drift, and emerging bias. The platform provides model performance insights and automated alerting when models start behaving differently than expected.

Best fit: Teams running production machine learning and language models who need reliable performance monitoring without enterprise governance overhead. Data science teams value Arthur’s technical depth and ease of integration.

Key differentiator: Arthur’s performance monitoring is technically sophisticated, but it lacks the broader governance features needed for regulatory compliance or enterprise policy enforcement.

Weights and Biases

Weights and Biases helps machine learning teams track experiments, manage models, and monitor performance in production. The platform has expanded to include responsible AI features like bias detection and fairness metrics.

Best fit: Teams already using Weights and Biases for experiment tracking who want to layer governance onto their existing workflows. The platform is popular in research and ML-heavy organizations.

Key differentiator: Weights and Biases excels at tracking model development and experimentation, making it valuable for understanding how models evolved. However, governance and compliance features feel like add-ons rather than core functionality.

Open-Source and Budget-Friendly Options

Organizations with limited budgets have options too. Open source AI governance tools provide basic functionality without licensing costs, though they require more hands-on maintenance.

Guardrails AI

Guardrails AI is an open-source framework that lets you define and enforce rules around AI system behavior. You can create guardrails that prevent language models from generating harmful content, leaking sensitive information, or behaving outside acceptable parameters.

Best fit: Teams with technical expertise who can customize and maintain open source tools. Organizations building internal AI governance processes benefit from Guardrails AI’s flexibility and control.

Key differentiator: Guardrails AI gives you complete control over your governance rules and no licensing costs, but implementation requires Python expertise and ongoing maintenance.

Alibi Explain

Alibi Explain is an open-source Python library that provides model explainability and interpretability. You can understand how models make predictions and detect whether model behavior has changed over time.

Best fit: Data science teams needing explainability functionality without enterprise licensing. The library integrates into Python-based ML workflows naturally.

Key differentiator: Alibi Explain is technically excellent for its specific purpose but provides no policy enforcement or compliance reporting capabilities.

WhyLabs

WhyLabs sits between open-source and commercial platforms. The core open-source monitoring library, whylogs, is free, but the commercial WhyLabs platform adds governance, compliance reporting, and automated alerting on top.

Best fit: Organizations wanting to start with open-source monitoring and scale to enterprise governance as they mature. You can begin free and upgrade when your governance needs grow.

Key differentiator: WhyLabs’ hybrid approach means you’re not locked into expensive enterprise contracts from day one, but scaling to their commercial platform requires additional investment.

Choosing Your Platform

The right AI governance tool depends on where your organization sits today. Small teams with limited budgets start with open-source options. Growing organizations with multiple AI systems gravitate toward dedicated governance platforms. Enterprises with existing tool investments often extend those platforms with governance add-ons.

I recommend starting by identifying your governance gaps from the core capabilities section (Section 4), then matching your maturity level from Section 9. After that, match those gaps to the platform categories and specific tools listed here. Most importantly, avoid the mistake of buying a platform that covers only one capability area. Real governance requires monitoring, policy enforcement, documentation, and risk assessment working together. The best platform for your organization integrates those capabilities seamlessly.

AI Governance Tools for Compliance Workflows: EU AI Act, NIST and ISO 42001

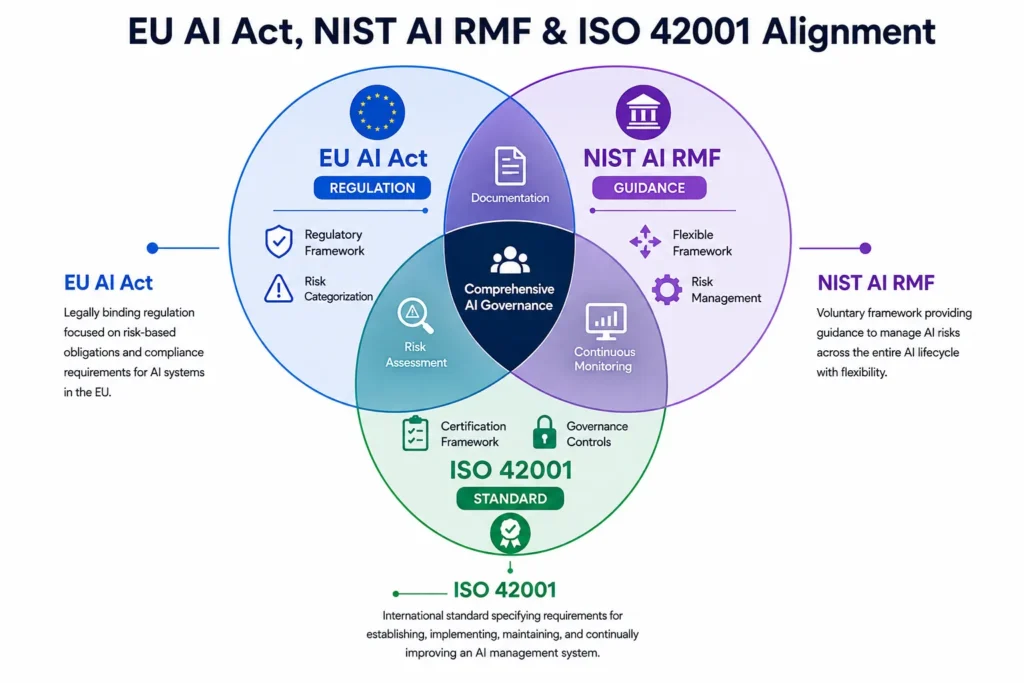

Regulatory requirements for AI are tightening globally, and your governance tool needs to help you meet these standards without drowning your team in manual documentation. Three frameworks now dominate the compliance landscape: the EU AI Act, the NIST AI Risk Management Framework, and ISO 42001. Each one approaches governance differently, but the right tools automate alignment across all three simultaneously.

EU AI Act Compliance Requirements

The EU AI Act entered into force in August 2024, and its obligations are phasing in over the following years, so organizations operating in Europe or serving European customers need to plan for compliance now. The law categorizes AI systems into risk tiers, and your compliance obligations depend on which tier your models fall into.

The EU AI Act creates four risk categories. Unacceptable-risk systems such as social scoring are banned entirely. High-risk systems like hiring AI or credit decisions require extensive documentation, bias testing, and human oversight before deployment. Limited-risk systems need basic transparency measures. Minimal-risk systems have no specific requirements beyond general good practice.

Your AI governance tools for compliance workflows need to automatically categorize your models into these risk tiers and generate the documentation the EU AI Act requires. When you deploy a high-risk model, the platform should trigger the mandatory bias auditing, performance monitoring, and record-keeping steps on its own, so your team doesn’t have to track each requirement manually.
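
Here is a rough sketch of what that automatic categorization might look like under the hood. The use-case lookup, the control names, and the `required_controls` function are all hypothetical, and actual tier assignment under the Act needs legal review rather than a dictionary.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Illustrative lookup only: real classification needs legal review of the Act.
USE_CASE_TIERS = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "hiring": RiskTier.HIGH,
    "credit_decision": RiskTier.HIGH,
    "customer_chatbot": RiskTier.LIMITED,  # assumed example of a transparency case
}

# Controls the platform should trigger for each tier (names are hypothetical).
REQUIRED_CONTROLS = {
    RiskTier.UNACCEPTABLE: ["block_deployment"],
    RiskTier.HIGH: ["bias_audit", "performance_monitoring", "record_keeping", "human_oversight"],
    RiskTier.LIMITED: ["transparency_notice"],
    RiskTier.MINIMAL: [],
}


def required_controls(use_case: str) -> tuple[RiskTier, list[str]]:
    """Look up the tier for a use case, defaulting to minimal risk if unlisted."""
    tier = USE_CASE_TIERS.get(use_case, RiskTier.MINIMAL)
    return tier, REQUIRED_CONTROLS[tier]


tier, controls = required_controls("hiring")
print(tier.value, controls)
```

Defaulting unlisted use cases to minimal risk keeps the demo short, but a real workflow would more safely route anything unrecognized to manual review.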

The EU AI Act imposes significant financial penalties for non-compliance, with fines for the most serious violations reaching up to €35 million or 7% of global annual turnover, whichever is higher. This makes automated compliance documentation essential rather than optional. A good AI compliance tool creates audit-ready records showing exactly what testing you performed, what results you found, and what safeguards you implemented. When regulators investigate, you can pull this documentation instantly instead of scrambling to reconstruct your process.

NIST AI Risk Management Framework Alignment

The NIST AI Risk Management Framework provides a structured approach to managing AI-related risks across your organization. Unlike the EU AI Act, which is prescriptive, the NIST AI RMF is a voluntary, flexible framework that applies to any organization regardless of geography.

NIST AI RMF has four core functions that work together. Govern establishes your AI governance structure and policies. Map identifies which AI systems you have and what risks they present. Measure determines whether your systems are performing fairly and reliably. Manage implements controls to address identified risks and prevent problems.

Your AI governance framework should support all four NIST functions through its core capabilities. The Govern function needs policy enforcement and acceptable use policies. Map requires comprehensive model inventory and risk assessment. Measure depends on continuous monitoring and bias auditing. Manage needs documented controls and audit trails showing what actions you took to address risks.
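
One way to use this mapping during a tool evaluation is a simple gap check: list what each function depends on, then subtract what a candidate platform offers. The sketch below does that. The snake_case capability labels and the `coverage_gaps` function are my own hypothetical names, not vendor feature names or NIST terminology.

```python
# Capabilities each NIST AI RMF function depends on, taken from the paragraph above.
# The snake_case labels are hypothetical, not vendor feature names.
NIST_NEEDS = {
    "Govern": {"policy_enforcement", "acceptable_use_policy"},
    "Map": {"model_inventory", "risk_assessment"},
    "Measure": {"continuous_monitoring", "bias_auditing"},
    "Manage": {"documented_controls", "audit_trail"},
}


def coverage_gaps(platform_capabilities: set[str]) -> dict[str, set[str]]:
    """Return, per NIST function, the capabilities the platform is missing."""
    gaps = {}
    for function, needed in NIST_NEEDS.items():
        missing = needed - platform_capabilities
        if missing:
            gaps[function] = missing
    return gaps


candidate = {"policy_enforcement", "acceptable_use_policy", "model_inventory", "continuous_monitoring"}
for function, missing in coverage_gaps(candidate).items():
    print(f"{function}: missing {sorted(missing)}")
```

A platform that returns an empty result covers all four functions on paper, which is a starting point for a demo conversation rather than proof of fit.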

Many organizations use NIST AI RMF as their governance baseline before formal regulation arrives in their jurisdiction. It’s widely recognized as best practice, and demonstrating NIST alignment gives you credibility with regulators, customers, and auditors. Your governance tool should help you map your policies to specific NIST functions and document that alignment in your compliance reporting.

ISO 42001 and the Path to Certification

ISO 42001 is the international standard for AI management systems, and organizations pursuing formal certification follow a structured implementation path. Unlike the NIST AI RMF, which is voluntary guidance, ISO 42001 is a certifiable standard with specific requirements that third-party auditors verify.

ISO 42001 implementation happens across four distinct phases. In the first phase, you define the scope of your AI management system and identify which parts of your organization it covers. The second phase involves stakeholder analysis and understanding what different groups need from your governance. The third phase is where you perform risk treatment planning and design controls. The final phase leads to your certification audit.

The standard includes 38 specific controls from Annex A, but organizations don’t implement all of them. Instead, you evaluate which controls apply to your specific risk profile and stakeholder landscape. You then create a Statement of Applicability documenting which controls you’re using and why. This document becomes a central part of your certification audit.
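
A Statement of Applicability is essentially a structured record of decisions, so it helps to see one as data. This is a minimal sketch under my own assumptions: the `ControlDecision` fields and `build_soa` function are hypothetical, and the control IDs are placeholders rather than real Annex A references.

```python
from dataclasses import dataclass


@dataclass
class ControlDecision:
    control_id: str       # placeholder ID, not a real Annex A reference
    applicable: bool
    justification: str    # why the control is included or excluded


def build_soa(decisions: list[ControlDecision]) -> dict[str, list[str]]:
    """Group control IDs by applicability, rejecting undocumented decisions."""
    undocumented = [d.control_id for d in decisions if not d.justification.strip()]
    if undocumented:
        raise ValueError(f"Missing justification for: {undocumented}")
    return {
        "applicable": [d.control_id for d in decisions if d.applicable],
        "excluded": [d.control_id for d in decisions if not d.applicable],
    }


soa = build_soa([
    ControlDecision("CTRL-01", True, "We deploy customer-facing models"),
    ControlDecision("CTRL-02", False, "No third-party model suppliers in scope"),
])
print(soa)
```

Refusing to build the statement when a justification is blank mirrors the "and why" requirement: an exclusion without a reason is the kind of gap an auditor will ask about.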

Implementing ISO 42001 without AI governance tools is incredibly difficult because the standard requires documented evidence of your controls, risk assessment, stakeholder consultation, and monitoring activities. Tools that support ISO 42001 alignment automate much of this documentation. They track which controls you’ve implemented, gather evidence of compliance, and generate reports auditors need to see.

Secondary Compliance Contexts

Beyond these three primary frameworks, GDPR compliance remains critical for organizations handling European personal data. Your AI governance tools need to document how you’re protecting training data privacy and ensuring models don’t leak personal information.

The Blueprint for an AI Bill of Rights, published by the White House Office of Science and Technology Policy in 2022, provides guidance on responsible AI practices in the United States. While not legally binding, it’s becoming an expectation for government contractors and organizations doing business with federal agencies. Your governance tools should help you align with these principles even if they’re not yet formal legal requirements.

Choosing Tools for Regulatory Alignment

The best compliance-ready AI governance tools map features to multiple frameworks simultaneously. When you set up a governance workflow, the platform should automatically align your processes with EU AI Act requirements, NIST functions, and ISO 42001 controls. This eliminates the need to design separate governance processes for each regulation.

Look for tools that generate audit-ready documentation automatically rather than requiring manual report compilation. Your governance platform should maintain records of model categorization, risk assessments, control implementation, and monitoring results without requiring your team to manually update spreadsheets. When an auditor asks for evidence of your governance process, you should be able to generate comprehensive documentation instantly from your governance system.

Shadow AI Is Already in Your Organization (Here Is How to Find It)

Shadow AI exists inside most organizations without anyone officially knowing about it. These are AI systems operating in the background that nobody formally approved, documented, or included in your governance process. I’ve seen organizations with strong central governance completely miss shadow AI because they weren’t looking in the right places.

The problem is more serious than you think. Every undocumented AI system represents a potential compliance violation, a source of unmanaged bias, and a risk that regulators will discover before you do. Finding shadow AI requires a systematic approach using four discovery channels that most organizations never check.

Four Practical Ways to Discover Shadow AI

Start by contacting your IT department and requesting the software approval list. This list shows every application and tool approved for use across your organization. Look specifically for entries containing machine learning packages, AI libraries, or analytics platforms. Many shadow AI systems hide in commercial tools rather than custom-built systems.

Your privacy team is another goldmine of information. Privacy professionals conduct Data Protection Impact Assessments, or DPIAs, when projects handle sensitive personal data. These assessments often identify AI systems because privacy experts are trained to spot automated decision-making. Ask your privacy team to share DPIAs completed in the last two years and review them specifically for AI references.

Search your code repositories, whether they live on GitHub or another version control host. Machine learning projects leave traces in code repositories through imported libraries like TensorFlow, PyTorch, or scikit-learn. A simple search for these library names across all repositories reveals development work that may have graduated to production without formal governance review.

Finally, audit embedded AI in everyday commercial tools. Slack uses AI for message search and workflow automation. Google Workspace includes AI-powered writing suggestions and smart replies. Microsoft 365 has Copilot integrated into Word, Excel, and Outlook. These tools are processing your organizational data through AI systems that you didn’t build and probably didn’t formally approve.
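
The repository search is the easiest of the four channels to automate. As a rough sketch, assuming your repositories are already cloned under one local folder, the script below walks every Python file and reports which ones import TensorFlow, PyTorch (imported as `torch`), or scikit-learn (imported as `sklearn`). The function name `find_ml_imports` is hypothetical, and the scan only covers Python source, so notebooks and other languages would need their own checks.

```python
import re
from pathlib import Path

# Matches "import torch" or "from sklearn import ..." at the start of a line.
ML_IMPORT = re.compile(r"^\s*(?:import|from)\s+(tensorflow|torch|sklearn)\b", re.MULTILINE)


def find_ml_imports(root: str) -> dict[str, set[str]]:
    """Map each Python file under root to the ML libraries it imports."""
    hits = {}
    for path in Path(root).rglob("*.py"):
        try:
            text = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue  # unreadable file, skip rather than abort the scan
        libraries = set(ML_IMPORT.findall(text))
        if libraries:
            hits[str(path)] = libraries
    return hits
```

Calling `find_ml_imports` on the folder that holds your checkouts gives you a list of files to trace back to owners. Every hit is a lead to investigate, not proof of a production AI system.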

The Third Party Vendor Risk Nobody Addresses

Shadow AI detection becomes even more critical when you consider third-party vendors. Your organization might have excellent internal AI governance, but if you’re sending data to external partners without understanding how those partners use AI, you’re exposed to massive risk.

Vendors frequently use AI to process your data without explicit notification. A vendor managing your customer data might use machine learning to detect fraud, predict churn, or optimize operations. If that vendor has poor AI governance or uses your data to train models they sell to competitors, your organization bears the risk even though you don’t control the system.

AI supply chain risk is routinely overlooked in governance programs. Organizations focus on securing their internal AI ecosystem but ignore the AI governance practices of vendors they rely on. You need to ask vendors directly about their AI systems, request documentation of their governance practices, and include AI governance requirements in vendor contracts going forward.

Two Separate Documents for Complete Governance

Once you’ve discovered all your shadow AI systems and identified vendor risks, you need two linked but separate documents to manage them properly. The AI asset inventory lists every AI system you’ve discovered, including shadow AI and vendor systems. For each system, document what it does, where it runs, who owns it, and what data it uses.

The risk register is a separate document linked to your AI asset inventory. For each system listed in your inventory, the risk register documents specific risks associated with that system, estimates their likelihood, describes mitigation plans you’ve implemented, and calculates residual risk after mitigation. This separation between inventory and risk management gives you flexibility to manage systems at different governance maturity levels.

A system listed in your inventory might represent minimal risk and require only basic monitoring. Another system might be high-risk and require intensive governance, bias auditing, and continuous oversight. The risk register lets you allocate governance resources based on actual risk rather than treating all systems identically.
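
If it helps to picture the two documents, here is a minimal sketch of them as linked records. The field names follow the descriptions above, but the impact scale, the `mitigation_effectiveness` field, and the residual risk formula are my own assumptions. Your risk team may score things differently.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    system_id: str
    purpose: str
    runs_on: str
    owner: str
    data_used: str


@dataclass
class RiskEntry:
    system_id: str                    # links the entry back to the inventory
    description: str
    likelihood: float                 # 0.0 to 1.0
    impact: int                       # assumed 1 (minor) to 5 (severe) scale
    mitigation: str
    mitigation_effectiveness: float   # assumed share of risk removed, 0.0 to 1.0

    def residual_risk(self) -> float:
        """Assumed formula: inherent risk scaled down by mitigation effectiveness."""
        return self.likelihood * self.impact * (1 - self.mitigation_effectiveness)


def orphaned_risks(inventory: list[AISystem], register: list[RiskEntry]) -> list[RiskEntry]:
    """Return risk entries that point at a system missing from the inventory."""
    known = {system.system_id for system in inventory}
    return [risk for risk in register if risk.system_id not in known]


inventory = [AISystem("resume-screener", "Ranks job applicants", "Vendor SaaS", "HR", "Applicant CVs")]
register = [RiskEntry("resume-screener", "Gender bias in ranking", 0.5, 4, "Quarterly bias audit", 0.5)]
print(register[0].residual_risk())
```

The shared `system_id` is what keeps the documents linked but separate: the inventory can grow without touching risk scores, and a check like `orphaned_risks` catches register entries that have drifted out of sync.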

The hardest part about shadow AI is admitting you probably have it. Most organizations discover shadow AI when they start looking seriously, and the number is usually larger than expected. The good news is that discovering shadow AI gives you a chance to bring it under governance before it causes harm. Ignoring shadow AI doesn’t make it go away. It just means the risk is happening without your knowledge or control.

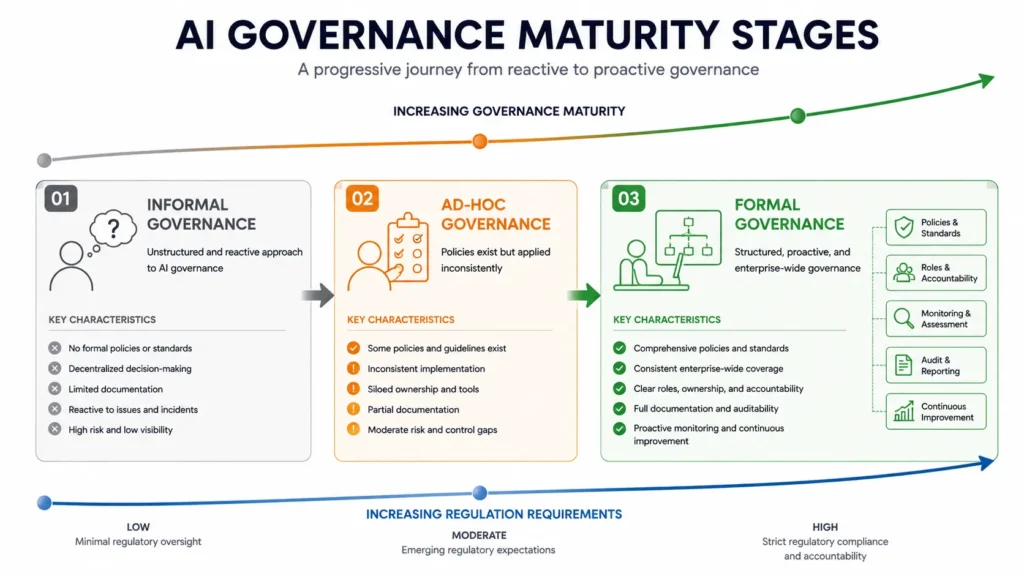

Which Stage of AI Governance Are You At? Three Maturity Levels Explained

Understanding where your organization stands in AI governance maturity helps you pick the right tools and avoid overspending on capabilities you don’t yet need. Most organizations underestimate their governance needs, but some also buy enterprise platforms when they’re still at an early stage. I’ve seen both mistakes waste money and frustrate teams.

Organizations progress through three distinct maturity stages. Knowing which stage you’re in right now is the first step toward building sustainable governance that scales with your AI footprint.

Informal Governance: Where Most Organizations Still Are

Informal governance relies on organizational values and principles without any formal structure. You probably have an internal review committee or an ethics board that looks at AI projects. People make governance decisions based on what feels right rather than following documented processes.

Informal governance often works fine for early-stage startups building a few experimental AI systems. The team is small, communication is direct, and decisions happen quickly. But the moment your organization scales beyond a handful of projects, informal governance breaks down.

Here’s why informal governance fails: There’s no consistency. One reviewer might approve a model that another reviewer would reject. Decisions aren’t documented, so when regulators ask how you made a governance decision, you can’t explain your reasoning. New team members don’t know what’s expected because the process lives only in people’s heads.

Informal governance is completely insufficient if you’re in a regulated industry, handling customer data, or subject to the EU AI Act. You also can’t scale AI responsibly when governance depends on informal committees. As your AI footprint grows, informal governance becomes a bottleneck that slows down every project.

If you’re in informal governance right now, your next step isn’t buying an expensive enterprise platform. Start documenting your current informal process and move toward ad-hoc governance with lightweight tools like open-source frameworks or basic SaaS platforms.

Ad-Hoc Governance: Specific Policies Without Full Coverage

Ad-hoc governance has established policies and procedures, usually created in response to a specific risk or incident. Your organization decided to govern AI because something went wrong or because a regulator asked questions. Now you have documented processes, but those processes only cover certain types of AI systems or specific risk scenarios.

Many mid-market organizations sit in this stage right now. You have policies for hiring AI and credit decision models because those use cases triggered governance attention. But you haven’t systematized governance across your entire AI portfolio. Models used internally for analytics might still operate without formal oversight.

Ad-hoc governance is better than informal, but it’s still reactive rather than proactive. You’re responding to known risks instead of identifying risks systematically across all AI systems. This approach leaves blind spots that regulators might find, or your competitors might exploit, before you even know they exist.

The gap in ad-hoc governance is coverage. You need a more comprehensive framework that governs all AI systems, not just the ones you’re worried about. Moving from ad-hoc to formal governance requires purpose-built AI governance platforms that provide consistent oversight across your entire portfolio.

Purpose-built specialized platforms work well at this stage because you’re still establishing governance workflows. Lightweight platforms let you implement governance without overengineering the process. As your governance matures, you can layer in more sophisticated capabilities.

Formal Governance: The Standard Regulators Now Expect

Formal governance features a comprehensive framework aligned with laws and regulations. Your organization conducts mandatory risk assessments before deploying any AI system. You perform ethical reviews. You have structured oversight with clear role assignments and documented accountability.
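
In practice, "mandatory" means a release cannot proceed without the evidence on file. A simple pre-deployment gate captures the idea. The required field names, the `deployment_blockers` function, and the record contents below are hypothetical examples, not a standard schema.

```python
# Hypothetical evidence fields a model record must carry before release.
REQUIRED_EVIDENCE = ("risk_assessment", "ethical_review", "accountable_owner")


def deployment_blockers(model_record: dict) -> list[str]:
    """Return the required evidence fields that are missing or empty."""
    return [field for field in REQUIRED_EVIDENCE if not model_record.get(field)]


record = {"name": "churn-model", "risk_assessment": "RA-2026-014", "ethical_review": ""}
blockers = deployment_blockers(record)
if blockers:
    print(f"Deployment blocked, missing: {blockers}")
```

Wiring a check like this into the release pipeline turns the policy from something people are asked to remember into something the process enforces.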

Formal governance is what regulators expect to see, especially as AI laws become more complex globally. If you’re operating under the EU AI Act, ISO 42001 certification requirements, or NIST AI Risk Management Framework guidance, formal governance is now non-negotiable. Even organizations without formal legal requirements are moving toward formal governance because customers and investors expect it.

Formal governance requires enterprise-grade AI safety and governance tools that provide full lifecycle coverage. You need platforms that track models from development through deployment through retirement. You need continuous monitoring, audit trails, policy enforcement, and automated compliance reporting.

Building formal governance takes time and investment. Start with a centralized approach where your governance team controls all assessments and reviews. This ensures consistency while you establish baseline processes. As your program matures and you have strong governance practices documented, transition to federated governance where business units have more autonomy but still follow your governance framework.

The transition from informal through ad-hoc to formal governance doesn’t happen overnight. Most organizations take one to two years to establish formal governance completely. But regulators expect this progression, and customers increasingly demand it. The sooner you acknowledge your current stage and start moving toward formal governance, the better positioned you’ll be when regulations tighten further.

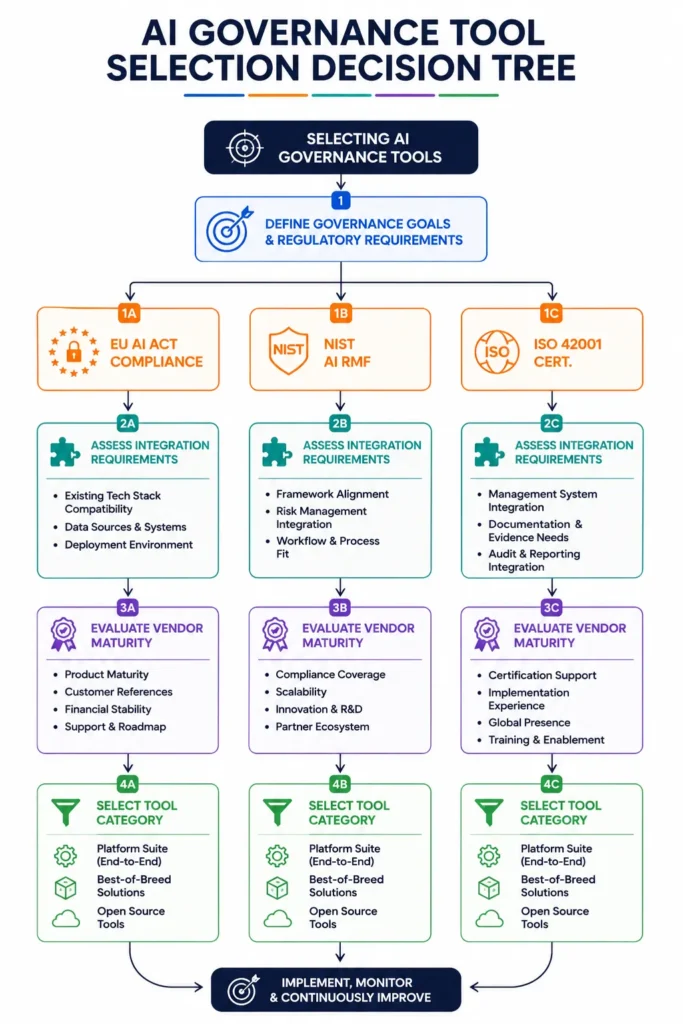

How to Choose AI Governance Tools That Actually Fit Your Organization

Picking the right AI governance tool is different from picking other enterprise software. You’re not just buying features. You’re building the foundation for how your organization will manage risk across all AI systems for years to come. A bad choice now creates technical debt and frustration that compounds over time.

I’ve watched organizations make expensive mistakes by selecting tools based on vendor marketing rather than actual governance needs. The solution is to follow a systematic evaluation process that starts with understanding your specific problems before looking at any platforms.

Define Your Governance Goals and Regulatory Requirements First

The biggest mistake I see organizations make is selecting a tool before defining what they’re trying to accomplish. You need to understand your governance goals and regulatory requirements first, then find tools that fit those needs rather than the other way around.

Are you subject to the EU AI Act? That changes everything about what your governance platform needs to do. Organizations operating in Europe or serving European customers have mandatory compliance requirements that demand specific capabilities like risk categorization, bias testing documentation, and audit trails. US organizations following NIST AI RMF voluntarily have different requirements than organizations pursuing ISO 42001 certification.

Your industry matters too. Healthcare organizations handling patient data have different governance obligations than financial institutions making credit decisions or insurance companies assessing risk. Regulated industries have sector-specific audit standards and data residency requirements that your governance tool must support.

Start by clearly defining the specific problems you’re trying to solve. Are you discovering shadow AI systems running without oversight? Are you trying to prove compliance to regulators? Do you need to prevent biased hiring algorithms from entering production? Understanding your actual governance problem reveals who will be affected and what your risk matrix requires.

Once you understand your governance goals, document your regulatory obligations. This becomes the foundation for evaluating which platforms can actually meet your requirements versus which ones just look impressive in demos.

What Are the Best Government-Compliant Tools for Secure AI Development

If you’re in public sector or regulated industries, government compliance becomes a primary selection criterion. Government-compliant AI governance tools must meet specific requirements around data handling, security certifications, and audit capabilities.

FedRAMP authorization matters for organizations serving US federal agencies. Your governance platform needs to operate in FedRAMP-authorized cloud environments or support on-premises deployment. Data residency requirements mean your AI system data can’t flow to external servers if you’re handling sensitive information.

Regulated industries like healthcare and finance have sector-specific audit standards your governance tool must support. A healthcare governance tool needs to generate documentation that satisfies HIPAA audit requirements. Financial services governance platforms need to produce records that satisfy banking regulators.

Your organizational role also matters for choosing compliant tools. Are you an AI provider building and deploying models for customers? An AI producer using models within your organization? Or an AI user deploying vendor-provided AI systems? Each role requires different governance controls and platform capabilities.

Ask vendors directly about their government compliance certifications, data residency options, and audit capabilities before evaluating further. This filtering step eliminates platforms that can’t meet your regulatory requirements regardless of how good they look otherwise.

Integration Requirements and Stack Compatibility

Your governance platform needs to integrate seamlessly with the ML infrastructure you’re already using. If your data science team builds models on Amazon SageMaker, your governance tool needs to see and monitor those models. If you use Azure ML, the platform must integrate there. Same for Google Vertex AI, Databricks, or any other major ML platform.

Many organizations have models spread across multiple cloud providers and internal infrastructure. Your governance tool must be vendor-agnostic and see AI systems regardless of where they were built. A platform that only works with one cloud provider creates blind spots and forces you to maintain multiple governance systems.

Check API availability for custom integrations. Some governance platforms provide robust APIs that let you connect to proprietary systems or specialized ML tools. Others have limited integration capabilities that force you to choose between the governance tool and your existing ML infrastructure.

Integration depth matters too. A shallow API integration that only reads model metadata isn’t the same as deep integration that gives you monitoring, policy enforcement, and automated documentation. Evaluate whether the platform’s integration approach matches your actual technical setup before committing.

Automation Depth, Usability and Vendor Maturity

Strong AI governance tools automate repetitive tasks that would otherwise consume enormous amounts of your team’s time. Automated bias testing, continuous monitoring, compliance report generation, and policy enforcement are capabilities that separate good tools from great ones.

Usability matters because your governance tool needs to be used by people across different functions. Data scientists need to understand what’s required of them. Compliance teams need to generate reports without technical expertise. Business stakeholders need to make governance decisions based on clear information. If the tool is too technical or confusing, people will work around it rather than with it.

Vendor maturity indicates whether the company will be around in three years and whether the platform will continue receiving updates and improvements. Established vendors with multiple customers and steady revenue have more staying power than early-stage startups with one or two enterprise customers. This matters because AI governance is too critical to build on unstable foundations.

Ask for references from similar organizations using the platform. Talk to current customers about how the platform performs in production, how responsive the vendor is to support requests, and whether the product roadmap aligns with emerging governance requirements.

Building Your AI Governance Program From Scratch: The First Five Steps

Starting an AI governance program feels overwhelming if you’ve never done it before. You’re juggling frameworks like NIST AI RMF and ISO 42001, trying to figure out who should be involved, and wondering whether you need a tool before you even have a process. I’ve watched organizations get paralyzed by perfectionism when what they actually need is to start taking action.

The secret to building a successful governance program is to focus on execution over perfection. You don’t need a perfect framework or a fancy tool to begin. You need a clear process for finding AI systems, understanding their risks, and keeping them under control. Everything else builds from that foundation.

Step 1: Build the AI Inventory Before You Do Anything Else

Your first task is creating a complete list of every AI system your organization has. This includes systems in production, systems under development, and systems being planned. You’re also hunting for shadow AI using the four discovery channels I covered in Section 7.

Start with your IT department’s software approval list and look for machine learning packages. Talk to your privacy team about AI systems mentioned in Data Protection Impact Assessments. Search your GitHub repositories for TensorFlow, PyTorch, and scikit-learn libraries. Audit commercial tools like Slack, Google Workspace, and Microsoft 365 to see what AI features your organization is actually using.

Your AI asset inventory should list each system with basic information: what it does, where it runs, who owns it, what data it uses, and when it was deployed. Don’t try to make the inventory perfect. Aim for good enough to work with. You’ll refine it as you learn more.

Create a separate risk register linked to your inventory. The inventory lists systems. The risk register documents specific risks for each system, estimates their likelihood, describes your mitigation plans, and calculates residual risk. This separation gives you flexibility to manage systems at different governance maturity levels without forcing everything into one spreadsheet.
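If you want something slightly more structured than two spreadsheet tabs, the split between inventory and risk register can be sketched as two record types joined by a system ID. Everything here is an assumption for illustration: the field names, the 1 to 5 scoring scales, the impact score, and the residual risk formula are not prescribed by any framework.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class AISystem:
    """One inventory row: what it does, where it runs, who owns it."""
    system_id: str
    purpose: str
    runs_on: str
    owner: str
    data_used: str
    deployed_on: Optional[date]  # None for planned or in-development systems


@dataclass
class RiskEntry:
    """One risk register row, linked to the inventory by system_id."""
    system_id: str
    description: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int  # assumed scale: 1 (minor) to 5 (severe)
    mitigation: str
    mitigation_effectiveness: float  # assumed scale: 0.0 (none) to 1.0 (full)

    @property
    def inherent_risk(self) -> int:
        return self.likelihood * self.impact

    @property
    def residual_risk(self) -> float:
        # Illustrative formula: scale inherent risk by what mitigation leaves.
        return self.inherent_risk * (1 - self.mitigation_effectiveness)


def orphaned_risks(inventory, register):
    """Return register entries that point at a system missing from the inventory."""
    known = {system.system_id for system in inventory}
    return [entry for entry in register if entry.system_id not in known]
```

Running orphaned_risks after every inventory update is a cheap way to notice when the two lists drift apart.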

Step 2: Triage by Risk Level and Focus Resources Where It Matters Most

Not all AI systems pose equal risk. You don’t have the resources to govern everything equally, so you need to identify which systems matter most and focus there first.

Categorize each system in your inventory as high, medium, or low risk using a consistent rubric. High-risk systems include hiring and HR applications that could cause discrimination, customer-facing applications that directly affect users, and systems with significant financial implications. A low-risk system might be internal analytics that helps your team understand trends but doesn’t drive major decisions.

Apply your risk rubric consistently across all systems. Don’t let politics or personal opinions drive the categorization. Let the actual risk determine where you allocate governance resources. This approach prevents you from spending months governing a low-risk internal tool while ignoring a high-risk customer-facing model.
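One way to keep opinions out of the categorization is to write the rubric down as code, so the same answers always produce the same tier. This is a sketch of the rubric described above, not a standard. The high-risk triggers come from the text; the medium tier rule is my assumption, so adjust it to your own definitions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskFactors:
    """Yes/no answers collected for each system in the inventory."""
    affects_hiring_or_hr: bool
    customer_facing: bool
    significant_financial_impact: bool
    drives_major_decisions: bool


def risk_tier(factors: RiskFactors) -> str:
    """Return 'high', 'medium', or 'low' for one system."""
    if (
        factors.affects_hiring_or_hr
        or factors.customer_facing
        or factors.significant_financial_impact
    ):
        return "high"
    # Assumed rule: internal systems that still drive major decisions are medium.
    if factors.drives_major_decisions:
        return "medium"
    return "low"
```

For example, an internal trend dashboard with every answer set to False lands in the low tier, while a resume screener lands in the high tier no matter who argues otherwise.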

Once you’ve triaged your systems, start governance activities with the high-risk tier. Get those systems properly assessed, monitored, and documented. Then move to medium-risk systems. Low-risk systems get basic oversight but don’t consume enormous amounts of your governance team’s time.

Step 3: Form the Governance Committee and Assign Clear Ownership

Your governance program needs a dedicated committee with representatives from across your organization. Include Legal, Compliance, IT, Privacy, and relevant business stakeholders from departments using AI systems. Make sure someone from senior leadership is involved because governance programs that lack leadership buy-in consistently run out of resources before achieving meaningful results.

Assign clear ownership before your first meeting. Who approves new AI use cases? Who owns the inventory updates? Who conducts risk assessments? Who monitors systems in production? Without clear ownership, governance becomes everyone’s responsibility and nobody’s accountability.

Start with a centralized approach where your governance committee controls all assessments and reviews. This ensures consistency while you’re establishing baseline processes and building confidence in your governance methodology. As your program matures and you have strong governance practices documented, you can gradually delegate authority to business units while maintaining central oversight.

Make sure your CEO has visibility into which AI systems your organization is using and what risks they pose. This executive awareness prevents the board from discovering governance problems during an audit that your organization should have caught internally.

Step 4: Run Your First Risk Assessments Using Existing Frameworks

Don’t wait for the perfect assessment template. Use NIST AI RMF or ISO 42001 Annex A as your starting reference and begin assessing your high-risk systems immediately. Your first assessments will be rough. That’s expected and acceptable.

Conduct assessments with basic documentation or simple spreadsheets if that’s what you have. Resist the urge to search for a magic governance standard or framework that makes everything clear. You won’t find it. The best approach is to borrow concepts from existing standards, run a few assessments, then write your Standard Operating Procedures based on what actually worked in practice.

Document what went well during your first assessments and what was confusing or time-consuming. Use those observations to refine your process. Write clear SOPs after you’ve done a few assessments, not before. Your procedures should reflect what you learned from real assessment work rather than theoretical assumptions about how governance should work.

Step 5: Set Up Continuous Monitoring and Define Your Review Cadence

AI systems in production need real-time monitoring for performance drift, bias, and fairness. Define key performance indicators for each model before it goes to production. These KPIs should include accuracy metrics, fairness metrics, and business-relevant measurements depending on what the system actually does.

Set up monitoring dashboards that alert your team when KPIs move outside acceptable ranges. These alerts trigger investigation and intervention before a problem affects your customers or violates your governance policies.
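Before you have a dashboard, the same alerting logic fits in a few lines. The sketch below assumes each KPI has an acceptable range agreed before deployment. The class name, metric names, and thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KpiRange:
    """Acceptable range for one KPI, agreed before the model ships."""
    name: str
    minimum: float
    maximum: float


def out_of_range(ranges, observed):
    """Return one alert message per KPI that is missing or outside its range."""
    alerts = []
    for kpi in ranges:
        value = observed.get(kpi.name)
        if value is None:
            # A KPI that stops reporting is itself worth an alert.
            alerts.append(f"{kpi.name}: no value reported")
        elif not (kpi.minimum <= value <= kpi.maximum):
            alerts.append(
                f"{kpi.name}: {value} outside [{kpi.minimum}, {kpi.maximum}]"
            )
    return alerts
```

You would call out_of_range on a schedule with the latest metrics and route any non-empty result to whoever owns production monitoring.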

Establish an internal audit cycle that reviews governance practices at least annually if you’re pursuing ISO 42001 alignment. Schedule quarterly reviews at the executive level where senior management receives updates on AI system performance, governance findings, and corrective actions taken. This executive visibility keeps governance from becoming just a compliance checkbox.

Your governance program evolves over time. What starts as manual spreadsheets and simple processes gradually becomes more sophisticated as you invest in tools and develop deeper expertise. The key is to start now with whatever resources you have rather than waiting for perfect conditions that may never arrive.

Frequently Asked Questions About AI Governance Tools

What is the difference between AI governance tools and MLOps tools?

These two categories serve different purposes even though they often get confused. MLOps tools manage the technical lifecycle of building, training, deploying, and versioning machine learning models. Think of MLOps as the engineering toolkit that data scientists use to make models work properly.

AI governance tools enforce policy, ethics, compliance, and accountability across that entire machine learning lifecycle. While MLOps handles the technical execution, governance handles the oversight. MLOps is actually one component of what comprehensive governance platforms oversee. You need both, but they solve different problems.

Many enterprise platforms now combine elements of both MLOps and governance functionality. This integration makes sense because a governance tool needs visibility into how models are being built and deployed. However, the core distinction remains: MLOps focuses on making systems work. Governance focuses on making sure systems work responsibly and in compliance with policies.

Where do you start if your organization has no AI governance in place?

Starting from zero feels overwhelming, but the path forward is straightforward. Begin by creating an AI inventory to understand what systems already exist within your organization, including shadow AI systems nobody documented.

Once you know what systems you have, triage them by risk level. This step prevents you from wasting governance resources on low-risk systems while ignoring high-risk ones. Focus your initial governance work on high-risk systems that could cause the most damage if they fail or behave unfairly.

Form a governance committee with clear ownership across Legal, Compliance, IT, Privacy, and relevant business units. Don’t wait for perfect documentation or frameworks. Run your first risk assessments using NIST AI RMF or ISO 42001 as starting references, even if your assessments feel rough initially.

Get a few assessments completed before trying to perfect your documentation. Write your Standard Operating Procedures based on what you actually learned from real assessment work rather than theoretical assumptions about how governance should function. This action-over-perfection approach prevents the paralysis that stops many governance programs before they start.

What is shadow AI and how do you find it?

Shadow AI refers to any AI system operating within your organization that was never officially approved or documented. These systems exist in the background, often unknown to your central governance team, creating compliance and risk exposure you can’t see or manage.

To find shadow AI, start by requesting your IT department’s software approval list and looking for entries containing machine learning packages or analytics platforms. Consult with your privacy team because they conduct Data Protection Impact Assessments when projects handle sensitive data, and these assessments often identify AI systems that governance teams miss.

Search your GitHub repositories for machine learning libraries like TensorFlow, PyTorch, and scikit-learn. These library imports reveal development work that may have graduated to production without formal governance review. Finally, audit embedded AI in commercial tools your organization uses daily. Slack includes AI-powered message search and workflow automation. Google Workspace has AI-powered writing suggestions and smart replies. Microsoft 365 has Copilot integrated throughout Word, Excel, and Outlook. All these tools process your organizational data through AI systems you probably didn’t formally approve.

What is the difference between AI governance and AI security?

These terms sound similar but address completely different risk categories. AI governance handles self-inflicted risks created by your own choices. This includes deploying models trained on biased data, violating your own policies, failing to document how models work, or not catching performance problems until they affect customers.

AI security addresses externally inflicted risks where attackers try to manipulate your systems. This includes prompt injection attacks where someone tricks a language model into ignoring its instructions, data poisoning where attackers corrupt training data, and unauthorized access attempts. These are security problems, not governance problems.

Different leaders usually own these responsibilities. In many organizations, the Chief Risk Officer oversees AI governance and the Chief Information Security Officer oversees AI security. Both functions matter and they need to work together as part of a layered architecture, but they require different expertise and different tools to manage effectively.

Do AI governance tools detect bias automatically?

The best AI governance platforms include continuous bias monitoring that watches model outputs in production and flags statistical deviations across protected demographic groups. However, automated detection is only as reliable as the fairness metrics you configured during setup.
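To make "fairness metric" concrete, here is a minimal sketch of one common choice: compare the rate of positive outcomes across groups and report the ratio of the lowest rate to the highest. The function names are mine, and this is only one of many possible metrics. A ratio below 0.8 is a widely cited rule of thumb (the four-fifths rule) for flagging a disparity, not a legal determination.

```python
from collections import defaultdict


def selection_rates(groups, predictions):
    """Share of positive (1) predictions for each group label."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, prediction in zip(groups, predictions):
        totals[group] += 1
        positives[group] += int(prediction == 1)
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so there is no disparity to measure
    return min(rates.values()) / highest
```

Which groups you compare, and which outcome counts as positive, are exactly the setup decisions that determine how reliable the automated flag will be.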

Relying entirely on automated bias detection is a mistake. Before any model goes to production, you should run targeted fairness tests that deliberately try to break the model in ways that would reveal bias. This targeted testing often catches problems that statistical monitoring would miss initially.

Bias detection works best when you combine pre-deployment testing with continuous monitoring. The testing catches obvious problems before they reach customers. The monitoring catches bias that emerges over time as the model encounters new data in the real world.

What does ISO 42001 require for AI governance?

ISO 42001 (formally ISO/IEC 42001:2023) is the international standard for AI Management Systems. The standard itself doesn’t prescribe a project plan, so certification is usually approached as a phased roadmap; the one described here has 18 milestones in four phases. The first phase is project initiation where you secure leadership commitment and define scope. The second phase involves strategy and risk assessment where you identify which AI systems you have and what risks they present.

The third phase is implementation and training where you establish governance processes and teach teams how to use them. The fourth and final phase is operation and continuous improvement where you monitor systems and refine your governance based on what you learn.

During implementation, you evaluate which controls from ISO 42001 Annex A apply to your organization. Annex A lists 38 controls, and you select the ones that matter based on your specific risk profile and stakeholder requirements. You then complete a Statement of Applicability documenting which controls you implemented and why others don’t apply to your situation. This document becomes central to your certification audit.
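A Statement of Applicability is, at its core, a table with a decision and a justification for every control. The sketch below shows one way to hold that table and catch rows with no justification before an auditor does. The record layout is my assumption, and the control IDs in the usage example are placeholders, not real Annex A numbering.

```python
from dataclasses import dataclass


@dataclass
class SoAEntry:
    """One row of a Statement of Applicability."""
    control_id: str  # placeholder identifier, not an actual Annex A reference
    applicable: bool
    justification: str  # why it is implemented, or why it does not apply


def incomplete_entries(entries):
    """Return the control IDs whose justification is empty."""
    return [e.control_id for e in entries if not e.justification.strip()]


def soa_summary(entries):
    """Count applicable versus excluded controls."""
    applicable = sum(e.applicable for e in entries)
    return {"applicable": applicable, "excluded": len(entries) - applicable}
```

For example, a list containing SoAEntry("CTRL-01", True, "Required by our AI policy") and SoAEntry("CTRL-02", False, "") would report CTRL-02 as incomplete, because an exclusion with no stated reason is the kind of gap an audit tends to find.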

What is the most important capability to look for in an AI governance platform?

A complete and accurate AI inventory with vendor-agnostic model cataloging is the foundation everything else builds on. You cannot enforce policy across systems you haven’t discovered. You cannot detect bias in models you don’t know exist. You cannot produce audit documentation for systems you haven’t registered.

Every other governance capability depends on inventory working correctly. Bias monitoring needs to know which models to monitor. Policy enforcement needs to know which systems exist. Compliance reporting needs to track systems across your documentation. If your inventory is incomplete or inaccurate, your entire governance program has blind spots.
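You can check for these blind spots directly by comparing the inventory against the list of systems each capability actually covers. This is a sketch with hypothetical capability names and system IDs. In practice the covered sets would come from exports of your monitoring and policy tools.

```python
def coverage_gaps(inventory_ids, covered_by):
    """For each capability, list inventoried systems it does not cover."""
    gaps = {}
    for capability, covered_ids in covered_by.items():
        missing = inventory_ids - covered_ids
        if missing:
            gaps[capability] = missing
    return gaps


def unregistered(inventory_ids, covered_by):
    """Systems that some capability knows about but the inventory does not."""
    seen = set().union(*covered_by.values())
    return seen - inventory_ids
```

The first function shows where governance is not reaching systems you know about. The second shows where your tools know about systems the inventory missed, which is another way shadow AI surfaces.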

Look for platforms that discover AI systems regardless of where they were built. Your models might run on Amazon SageMaker, Azure Machine Learning, Google Vertex AI, or internal infrastructure. A truly useful governance tool sees all of them equally. This vendor-agnostic approach saves you from having to run governance across multiple fragmented systems.

Can small organizations afford proper AI governance tools?

Absolutely. You don’t need expensive enterprise platforms to start governing AI responsibly. Open-source options like Guardrails AI provide basic policy enforcement and output validation at zero cost. If you have Python expertise on your team, open-source frameworks let you implement governance controls without licensing fees.