20 Best AI Search Engine Optimization Tools to Get Cited in ChatGPT, Perplexity, and Gemini (2026)

What Are AI Search Engine Optimization Tools? (And Why They’re Different From Regular SEO Tools)

I’ve spent the last year watching something shift in search that most content creators haven’t caught yet. The search engine optimization tools I used to rely on for Google rankings are becoming less effective, not because they stopped working, but because the game itself has changed.

Quick Answer: AI search engine optimization tools are software platforms designed to help your content get discovered, cited, and recommended by AI-powered search engines like ChatGPT, Perplexity, and Google Gemini. They are not traditional SEO tools with updated features. They operate on entirely different principles because they solve a fundamentally different problem: getting AI engines to trust and cite you, not just getting Google to rank you.

When I talk to other content creators, most still believe ‘SEO tools’ and ‘AI SEO tools’ are the same thing. They’re not. Traditional SEO tools help you rank web pages higher so people click through to your site. AI search engine optimization tools help you get cited in AI-generated summaries, building authority and trust with audiences who may never visit your website directly but who now know and trust your brand because an AI they rely on recommended you.

That distinction matters more than you might think.

Right now, about 41% of search traffic still comes from Google’s traditional blue links, according to data from SparkToro and Datos. ChatGPT and similar AI platforms account for roughly 0.19% of total search traffic as of early 2025.

Those numbers sound small, but I’ve learned to read the trend, not just the snapshot. In late 2024, AI search traffic was barely measurable at any scale. That acceleration is real, and the growth curve is steeper than most people tracking it expected.

I started testing AI search engine optimization tools in early 2025 when I noticed something odd. My articles were ranking well on Google, but when I asked ChatGPT the same questions my articles answered, I wasn’t getting mentioned at all. Other websites, some with lower domain authority scores and fewer backlinks than mine, were being cited instead.

That’s when I understood I needed a completely different approach, and different tools, for this new search landscape.

Why AI Search Engine Optimization Tools Focus on GEO and Answer Engine Optimization (Not Just Google)

Generative engine optimization, or GEO, completely changes how we think about search visibility. Instead of optimizing to appear in a ranked list of links, you’re optimizing to become the source that AI engines trust enough to quote when they answer someone’s question.

The metric that matters has shifted completely. In traditional SEO, I tracked clicks, impressions, and average position. With answer engine optimization, I now track citations, brand mentions, and how often AI platforms like Perplexity and ChatGPT reference my content when someone asks a relevant question. Some weeks I see my content cited in dozens of AI responses — and that visibility compounds in ways a Google ranking never did.

When an AI engine cites you, it’s not just sending you traffic. It’s vouching for your expertise to every person who reads that response. That endorsement builds your authority in ways a simple search ranking never could.

I’ve seen this play out in my own projects. One of my websites gets cited regularly by Perplexity when people ask about YouTube analytics tools. The direct traffic from those citations is modest — maybe 60 to 80 visits per week. But the indirect effect has been significant. People who discover my site through an AI recommendation are noticeably more engaged. They spend longer on the page, subscribe to my newsletter at a higher rate, and are far more likely to share my content with their own audiences.

Here’s what I’ve found: when an AI cites you, it hands you something more durable than a ranking. Citations build authority. Authority earns trust. And trust creates the kind of long-term loyalty that no algorithm update can take away from you. That’s the real difference between traditional SEO and generative engine optimization: one is a competition for attention, the other is a competition for credibility.

Answer engine optimization goes one step deeper. Where GEO focuses broadly on making your content visible to generative AI platforms, AEO specifically targets the engines people talk to directly — ChatGPT, Claude AI, and Google’s AI Overviews. These aren’t passive search tools. They read your content, understand its context, and deliver it in a conversational response that feels like advice from a knowledgeable friend.

When someone asks ChatGPT “what are the best project management tools for small teams,” they’re not looking for ten blue links. They want a curated, explained answer with reasoning. If your content gets cited in that answer, you’ve won something more valuable than a click. You’ve earned a recommendation.

Once I understood this shift, I changed my entire content strategy. Instead of writing to rank for keywords, I started writing to be cite-worthy. That meant prioritizing clarity, tight structure, original expert insights, and verifiable facts. The AI search engine optimization tools I use now help me optimize for those qualities, not just keyword density.

How AI Search Engines Actually Work And Why This Changes Everything About AI-Powered SEO

Understanding how AI search actually functions changed everything about how I approach optimization. These engines work differently than Google at a fundamental level, and that difference dictates what kind of tools you need.

AI search engines operate on a two-part system, and understanding it is the key to effective LLM optimization. The first part is the Large Language Model, or LLM: the pre-trained AI that already ‘knows’ an enormous amount of information from its training data. When you ask ChatGPT a question, it draws on knowledge encoded during its training phase.

But here’s what surprised me when I first learned this: the LLM alone isn’t doing live searches. It cannot see your website if it was published after the model’s training cutoff date. That’s where web browsing capability comes in. Modern AI search engines actively crawl the internet in real time, retrieving current data and verifying claims before generating their answers.

This dual system creates a fundamentally different optimization challenge. You’re no longer just optimizing for algorithms that count keywords and backlinks. You’re optimizing for AI systems like ChatGPT and Perplexity that actually read your content, understand its context, evaluate your authority signals, and make a judgment call about whether to trust you enough to cite you.

I tested this by publishing nearly identical content in two formats. One was optimized the traditional way — keyword placement, meta descriptions, and internal links. The other was structured for AI readability: clear upfront answers, FAQ schema markup, and explicit expertise signals. The traditionally optimized version ranked higher on Google. The AI-optimized version was cited more than three times as often by ChatGPT and Perplexity. Same information. Different packaging. Completely different results.

AI crawlers evaluate content much like a careful editor would. Clear structure gets prioritized. Explicit expertise markers (author credentials, cited sources, verifiable claims) earn trust. And content that directly answers questions in plain language consistently outperforms articles that bury the answer under layers of keyword optimization.

Natural language processing, the technology that allows AI to understand human language, plays a bigger role in this than most people realize. When an AI engine reads your content, it is not simply matching keywords to a query. It comprehends meaning, identifies named entities, maps relationships between concepts, and assesses whether your reasoning holds up logically.

I started thinking about LLM optimization as a completely separate discipline from traditional SEO. The tools that help with LLM optimization analyze how AI engines will interpret your content. They check for semantic clarity, structural coherence, and authority signals that machine learning models recognize.

One of the biggest mistakes I made early on was assuming AI engines use the same ranking factors as Google. They don’t. Google still weighs backlinks and domain authority heavily. AI engines weigh content structure, clear expertise signals, and whether your information meets their quality and safety standards. These are genuinely different evaluation systems, and conflating them is the fastest way to optimize for the wrong thing.

The way search works has changed, and that means the tools you need have changed too. Traditional SEO tools show you keyword volumes and help you build backlinks. AI search engine optimization tools do something different: they help you structure content so AI can understand it, verify your expertise so AI trusts you, and track citations so you know when AI is actively recommending you to new audiences.

I’ve learned that the websites getting cited most consistently by AI platforms aren’t always the ones ranking highest on Google. They’re the ones that have adapted their content and optimization strategies to this new dual system of pre-trained knowledge plus live web research.

That’s why calling these tools “just SEO tools” misses the entire point. They’re built for a different search paradigm, one where being understood and trusted by artificial intelligence matters as much as ranking in an algorithm.

Why You Need AI Search Engine Optimization Tools Right Now: 3 Real Business Case Studies

I used to be skeptical about AI search optimization. It felt like another marketing buzzword that would quietly fade once the hype settled. Then I started looking at actual results from real businesses, and I couldn’t argue with the numbers.

Let me share three case studies that convinced me these tools aren’t optional anymore. They’re essential if you want to stay visible as search behavior shifts toward AI platforms.

Real Business Results: From #0 to #3 in AI Search

The case study that made me take AI search engine optimization seriously involves a software platform called Tube Analytics, a YouTube analytics tool competing against well-funded, established players in a saturated market. The founder was doing everything right by traditional SEO standards, but AI search didn’t care about his traditional rankings.

When the founder first checked how often AI engines mentioned his product, the result was zero. He would ask ChatGPT “what are the best YouTube analytics platforms” and get recommendations for competitors. Perplexity would list alternatives. Google’s AI Overview featured other tools. His product, despite having strong traditional search rankings, was completely invisible in AI search results.

He decided to test generative engine optimization techniques built specifically for AI visibility. The changes weren’t complicated, but they were different from standard SEO practices. He restructured content with Quick Answer sections at the top of key pages, added detailed FAQ schema markup that AI crawlers could easily parse, and started optimizing for the exact prompts people actually type into ChatGPT, not just the keywords they search on Google.
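To make the FAQ schema step concrete, here is a minimal sketch of how FAQ markup could be generated as JSON-LD. It is not the founder’s actual setup. The `build_faq_jsonld` helper and the sample questions are hypothetical, while `FAQPage`, `Question`, `Answer`, `mainEntity`, and `acceptedAnswer` are the schema.org vocabulary for this kind of markup.

```python
import json


def build_faq_jsonld(qa_pairs):
    """Return a schema.org FAQPage object for a list of (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


# Hypothetical questions and answers, for illustration only.
faq = build_faq_jsonld([
    ("What does a YouTube analytics platform do?",
     "It collects channel and video metrics and presents them as reports."),
    ("Who is this kind of tool for?",
     "Creators and teams who want to track channel performance over time."),
])

# Wrap the JSON in the script tag that carries JSON-LD inside an HTML page.
snippet = (
    '<script type="application/ld+json">\n'
    + json.dumps(faq, indent=2)
    + "\n</script>"
)
print(snippet)
```

The visible FAQ text on the page should match what the markup says, since the markup only describes content that is already there.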

Within three months, Tube Analytics ranked number three when users asked AI engines about YouTube analytics platforms. That’s a remarkable outcome: his product now appears in AI responses alongside, and sometimes before, competitors with ten times his marketing budget. Not because he outspent them. Because he optimized smarter.

The direct traffic from AI citations wasn’t enormous at first, around 40 to 60 visitors per day. But the quality of that traffic was exceptional. According to the founder’s own analytics, people who discovered his tool through an AI recommendation converted at nearly double the rate of regular search traffic. They trusted the source of the recommendation, and that trust transferred directly to his product.

One insight from this case study surprised me more than anything else: AI search rankings move far faster than traditional Google rankings. Google’s algorithm takes months to fully recognize and reward quality content. AI engines can start citing you within weeks, sometimes within days, if your content structure and authority signals are strong enough.

The founder told me that showing up in AI search results also improved his brand visibility in unexpected ways. Journalists started mentioning his tool in articles. Podcast hosts invited him for interviews. Other software companies reached out about partnerships. Getting cited by AI created a credibility signal that rippled through his entire industry.

This wasn’t about gaming an algorithm. It was about making his genuine expertise and quality product more discoverable to AI systems that were already looking for trustworthy recommendations. The tools he used helped him identify what AI engines valued and structure his content accordingly.

What an AI Visibility Audit Actually Reveals (And Why Traditional SEO Tools Miss It)

The dental practice case study hit differently because it wasn’t a tech startup. It was a completely ordinary local business. The dentist had invested heavily in a professional website with good traditional SEO. The site ranked well for local searches like “dentist in Orlando” and got steady organic traffic from Google.

When a marketing consultant ran an AI visibility audit using specialized tools, the website scored 59 out of 100. That score indicated significant structural barriers preventing AI engines from fully understanding and citing the site, even though human visitors and Google had no issues with it whatsoever.

The audit revealed specific technical issues that traditional SEO tools had completely missed. The biggest problem was missing schema markup. Schema is structured data that helps search engines understand what your content is about. Google can often figure things out without it, but AI engines rely heavily on schema to extract accurate information.

The dental practice had no Organization schema, no LocalBusiness schema, and no FAQ schema. When AI crawlers visited the site, they couldn’t confidently identify basic facts like the practice’s founding date, the dentist’s credentials, or answers to common patient questions.
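As an illustration of the kind of markup the audit found missing, here is a minimal sketch of LocalBusiness structured data built in Python. Every business detail is a hypothetical placeholder, not the practice’s real data. The property names (`telephone`, `foundingDate`, `address`, `employee`) come from the schema.org vocabulary.

```python
import json

# All business details below are hypothetical placeholders.
practice = {
    "@context": "https://schema.org",
    "@type": "LocalBusiness",  # schema.org also defines a more specific "Dentist" type
    "name": "Example Dental Practice",
    "url": "https://www.example.com",
    "telephone": "+1-407-555-0100",
    "foundingDate": "2005-03-01",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "123 Example Street",
        "addressLocality": "Orlando",
        "addressRegion": "FL",
        "postalCode": "32801",
        "addressCountry": "US",
    },
    "employee": {
        "@type": "Person",
        "name": "Dr. Jane Example",
        "jobTitle": "Dentist",
    },
}

# Serialize to JSON-LD text, ready to embed in a page.
print(json.dumps(practice, indent=2))
```

Stating the founding date and the dentist’s role explicitly answers the same basic questions the audit said crawlers could not resolve on their own.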

The second major issue was something called NAP consistency. NAP stands for Name, Address, and Phone number. The audit found that the practice’s phone number was formatted differently on their website, Google Business Profile, and various directory listings. One listing showed it with parentheses and dashes, another with dots, another with spaces.

To humans, these all look like the same phone number. But AI systems looking for exact data matches to verify business legitimacy saw inconsistencies and reduced their confidence in the listing. This was a citation optimization problem hiding in plain sight, costing the practice citations every time potential patients asked AI assistants to recommend dentists in Orlando.

The audit also identified content quality issues that were limiting AI visibility. The practice had a blog, but the articles were generic with no clear expertise signals: no author bios, no mention of the dentist’s specific qualifications or years of experience, and no real-world patient success stories that demonstrated actual outcomes.
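A NAP check like this is easy to sketch. The example below is an assumption about how such a check could work, not the audit tool’s actual logic. It strips punctuation to confirm the listings hold the same number, then separately flags whether the written format matches. The function names and sample listings are hypothetical.

```python
import re


def normalize_us_phone(raw):
    """Reduce a US phone number to its 10 digits, ignoring punctuation
    and a leading country code of 1."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    return digits


def check_nap_phone(listings):
    """Return (same_number, same_format) for a dict of source -> phone string."""
    same_number = len({normalize_us_phone(p) for p in listings.values()}) == 1
    same_format = len(set(listings.values())) == 1
    return same_number, same_format


# Hypothetical listings showing the three formats described above.
listings = {
    "website": "(407) 555-0100",
    "google_business_profile": "407.555.0100",
    "directory": "407 555 0100",
}

same_number, same_format = check_nap_phone(listings)
print(same_number, same_format)  # True False
```

A result of `True False` is the situation in this case study: the number is right everywhere, but the formatting differs, so the fix is to pick one format and use it on every platform.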

AI engines prioritize content that shows clear expertise and real experience. Without those signals, the blog content was essentially invisible to AI search, even though some articles ranked decently on Google.

What struck me most was this: the same website can rank well on Google and remain nearly invisible in AI search. Google has learned to be forgiving about missing schema markup and minor NAP inconsistencies. It infers context, fills gaps, and rewards established authority. AI search engines are more literal. They need explicit, structured signals to feel confident enough to cite you.

The dental practice addressed all identified issues over about six weeks. They deployed comprehensive schema markup, standardized their business information across every platform, and rewrote key service pages to include clear expertise markers alongside real patient experiences, all properly anonymized to protect privacy.

Three months after implementing the changes, the practice started appearing in AI search results when people asked for dentist recommendations in their area. The owner told me that patients now regularly mentioned finding them through “asking my phone” or “searching on ChatGPT,” phrases that never came up before.

The citation optimization work delivered an unexpected bonus: it improved their traditional SEO rankings at the same time. Clearer structure and stronger expertise signals helped with Google too. But the real win was becoming visible in a search channel that had been completely invisible to them before.

This case study taught me something important: AI visibility audits reveal blind spots that traditional SEO analysis misses entirely. You can have a technically strong website by old standards and still be completely invisible in today’s AI search landscape.

The Cost Revolution: Agency Quality for $1 Per Week

The third case study is the one I reference most often when I talk to content publishers about SEO costs. A publisher I know personally was paying between $2,000 and $5,000 per month to a well-regarded SEO agency. The agency was doing good work, manually researching keywords, writing optimized content, building backlinks, and tracking rankings.

But the monthly cost was eating into profits, especially as the business tried to scale content production. The publisher needed to produce more content to compete, but agency costs scaled linearly. Double the content meant roughly double the monthly bill.

Then he discovered AI search engine optimization tools with automation capabilities. He built a system using workflow automation software that cost less than $1 per week to run. That’s not a typo. Under one dollar weekly for a system that replaced thousands of dollars in monthly agency fees.

The automated system handled the full content cycle: research, writing, optimization, and publishing. Using multiple AI agents working in sequence, it planned articles, pulled verified facts from live web sources, drafted content in a natural, readable style, generated relevant featured images, and published everything directly to his website, all without any manual intervention.

I was skeptical when I first heard these numbers. How could automated tools possibly match the output quality of a human agency team? But when I looked at the actual traffic data and content performance metrics, I understood the distinction. The system wasn’t replacing human creativity or strategic thinking. It was automating the repetitive, mechanical tasks that agencies charge hundreds of dollars per hour to execute.

Keyword research, competitor analysis, content outlining, schema implementation, internal linking, and performance tracking all happened automatically. The publisher still made strategic decisions about content direction and brand voice. But the mechanical execution ran on autopilot.

The organic traffic results were comparable to what the agency had delivered. In some cases, better. The automated system could publish fresh content faster, respond to trending topics within hours instead of weeks, and scale production without additional cost.

What impressed me most was that this approach worked specifically because of AI search optimization. Traditional SEO automation often produces low-quality, spammy content that Google penalizes. But when you optimize for AI engines, the quality bar is actually higher. You need clear structure, factual accuracy, and genuine expertise signals. The automation tools built for AI optimization naturally produce better content because they’re designed around those requirements.

The cost savings let the publisher reinvest in other areas of the business. He hired a subject matter expert to review content for accuracy. He invested in better research tools. He expanded into new content categories that would have been financially impossible at agency pricing.

This case study showed me that AI search engine optimization tools aren’t just about getting citations from ChatGPT or Perplexity. They’re about doing SEO work more efficiently and effectively. The same tools that help you rank in AI search also make traditional SEO more affordable and scalable.

I don’t think agencies are obsolete. For complex strategies and high-stakes campaigns, human expertise still matters tremendously. But for routine optimization and content production, AI tools have changed the economics completely.

All three case studies point to the same conclusion. Businesses that adopted AI search engine optimization tools early captured visibility in a growing channel, improved their content quality as a direct byproduct, and reduced their operational costs simultaneously. And the advantage compounds: the earlier you start, the further ahead you get as AI search continues taking a larger share of total search traffic.

I started paying attention to brand visibility in AI search after seeing these results. Every business I know that invested in citation optimization and AI visibility is getting discovered by new audiences who would never have found them through traditional search alone.

The question isn’t whether AI search matters. The case studies prove it does. The question is whether you’ll adapt your optimization strategy before or after your competitors do.

Traditional SEO vs AI SEO: The Ranking Factors That Actually Change (And What to Do About It)

When I first started comparing my traditional SEO results with my AI search visibility, I noticed something that genuinely surprised me. The two didn’t match at all.

Pages that ranked on Google’s first page were getting zero citations from ChatGPT. Pages I’d barely optimized for keywords were getting cited regularly by Perplexity. The ranking factors that governed one world had almost nothing to do with the other.

This isn’t a minor difference you can paper over with a few tweaks. Traditional SEO and AI SEO operate on fundamentally different principles. Understanding those differences is the first step to building a strategy that works in both environments.

Let me break down exactly what changes.

How the Two Approaches Compare

Here’s a clear side-by-side comparison of how traditional SEO and AI SEO differ across the factors that matter most to your search visibility strategy:

| Factor | Traditional SEO | AI SEO |
|---|---|---|
| Primary goal | Rank web pages for clicks | Get cited in AI-generated answers |
| Success metric | Click-through rate and position | Citation frequency and mention quality |
| Keyword focus | Short-tail and exact-match keywords | Conversational prompts and questions |
| Content structure | Keyword density and headings | Clear answers, FAQ schema, structured data |
| Authority signals | Backlinks and domain rating | E-E-A-T signals and external mentions |
| Trust indicators | Domain age and link profile | Author credentials and content accuracy |
| Content freshness | Regular updates help rankings | Fresh content can override established authority |
| Technical priority | Site speed and crawlability | Schema markup and AI permissions |
| External platforms | Backlink sources | Reddit, Quora, reviews, and user content |
| Measurement tools | Rank trackers and analytics | Citation trackers and AI visibility scores |

I built this comparison from months of testing both approaches. The contrasts are striking once you see them laid out together.

The biggest mindset shift for me was moving from ‘how do I rank higher?’ to ‘how do I become more trustworthy?’ Google’s algorithm can be influenced by technical signals like backlinks and page speed. AI engines make judgment calls based on whether your content demonstrates genuine knowledge and real-world experience, and you can’t fake your way through that evaluation.

You can technically game traditional SEO to some degree. AI search is much harder to game because it evaluates the quality of your thinking, not just the structure of your page.

The 5 Ranking Factors That Actually Determine AI Search Citations

When I look at what consistently gets content cited by AI engines, I see a pattern that’s very different from traditional ranking factors.

AI engines evaluate content holistically. They read it the way an intelligent person would and ask whether it actually helps the person who asked the question. That evaluation draws on several signals:

Clarity of the answer. AI engines favor content that gets to the point quickly. If someone asks a specific question, your content should answer it directly in the first few sentences before expanding into context and detail.

Factual accuracy. Content with verifiable facts, specific numbers, and named sources consistently gets cited more often than vague, generalist content. I’ve tested this directly: AI engines cross-reference information and actively prioritize sources they can independently verify.

Structural organization. Proper heading hierarchy, FAQ sections, numbered lists, and clear paragraph breaks make content easier for AI to parse and extract. Unstructured walls of text rarely get cited even when the information inside is excellent.

Semantic search alignment. AI engines don’t match keywords. They understand meaning. This means two articles covering the same topic but written differently can both rank, while an article stuffed with keywords but lacking clear meaning will perform poorly. Semantic search rewards genuine communication over optimization tricks.

External validation. I was surprised by how much weight AI engines give to what other people say about you versus what you say about yourself. Mentions on Reddit threads, Quora answers, review platforms, and third-party articles all strengthen your credibility with AI systems, and none of it can be manufactured.

E-E-A-T Is Everything Now (Not Just a Suggestion)

I used to treat E-E-A-T as a nice-to-have. Google had been talking about Experience, Expertise, Authoritativeness, and Trustworthiness for years, and I implemented the basics without fully committing to the philosophy. That worked fine for traditional SEO.

For AI search, E-E-A-T signals aren’t optional. They’re the primary currency.

Here’s what I mean by that. When a human reads your content, they bring their own judgment and context. They might trust a well-written article even if the author is anonymous. When an AI engine evaluates your content, it needs explicit signals to determine whether your expertise is genuine.

Experience means demonstrating that you’ve actually done the thing you’re writing about, not just studied it. That shows up as first-person accounts, specific results with real numbers, and honest descriptions of what worked and what didn’t. AI engines recognize experiential content and treat it with significantly more authority than theoretical explanations.

Expertise means showing domain knowledge that goes beyond surface-level information. You demonstrate expertise by explaining concepts clearly, anticipating questions, addressing nuances, and providing insights that someone without real knowledge couldn’t offer.

Authoritativeness means being recognized by others in your field, not just claiming authority on your own website. When people with no financial stake in promoting you reference your work, recommend your tools, or cite your insights on Reddit, Quora, or industry forums, it sends a trust signal to AI engines that most websites can’t manufacture.

Trustworthiness means your content is accurate, your intentions are genuine, and you’re transparent about who you are and why you’re writing. Author bios with real credentials, links to professional profiles, and honest disclosure of limitations all strengthen trust signals.

Once I realized how directly E-E-A-T signals affected my AI citation rates, I made four concrete changes. I added detailed author information to every article. I included specific examples from my own testing rather than relying on generic explanations. I linked to credible external sources for every major claim. And when I discovered errors, I fixed them publicly instead of quietly hoping nobody would notice.

The content quality improvements that helped my AI search visibility also improved reader satisfaction. Better E-E-A-T signals mean better content overall. That’s not a coincidence. The signals work because they correlate with genuine quality.

I also learned that E-E-A-T signals need to be consistent across your entire site. One expertly written article surrounded by thin generic content confuses AI engines. They evaluate your overall authority, not just individual pages. Lifting the quality floor across your whole content library matters more than perfecting a handful of flagship pieces.

Keywords vs Prompts: How Search Intent Analysis Changes Your Optimization Target

This shift took me the longest to fully understand and apply. I’d spent years thinking in keywords. Short ones, long-tail ones, question keywords, commercial keywords. Every piece of content started with keyword research in a traditional SEO tool.

AI search completely changes the research question. Instead of asking ‘what keywords do people search for?’ I now ask ‘what do people actually say when they talk to AI assistants?’ Those two questions have surprisingly different answers, and optimizing for the wrong one means your content misses an entire category of discovery.

Traditional keyword research might tell me to target “best productivity apps” because that phrase has high search volume. But when someone opens ChatGPT, they don’t type “best productivity apps.” They type something like “I’m overwhelmed with work and need help managing my time better, what apps would actually help someone like me?”

That conversational prompt contains completely different optimization targets than any keyword tool would surface. The user mentions overwhelm and time management, emotional context that keyword research ignores entirely. They’re asking for personalized guidance, not a generic feature comparison. And the phrasing ‘would actually help someone like me’ suggests they may have already tried other apps and been disappointed.

Optimizing for that prompt means writing content that acknowledges the real problem, addresses the emotional context, and provides specific personalized guidance. A traditional keyword-optimized article about “best productivity apps” probably won’t get cited for that prompt even if it covers the same apps.

I now research prompts by testing them directly in AI engines and observing what gets cited. I look at which sources consistently appear for different types of questions. I analyze the structure and tone of cited content to understand what qualities made those sources trustworthy to the AI.
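The manual process above can be tallied with a short script. This is only a sketch under stated assumptions: the prompts and cited URLs are recorded by hand after each test, and the `observations` data, domain names, and function names are all hypothetical. It counts how many prompts cited each domain at least once.

```python
from collections import Counter
from urllib.parse import urlparse


def cited_domains(urls):
    """Return the set of domains (without a leading 'www.') in a list of cited URLs."""
    domains = set()
    for url in urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains.add(host)
    return domains


def citation_counts(observations):
    """Count, per domain, how many prompts cited it at least once."""
    counts = Counter()
    for urls in observations.values():
        counts.update(cited_domains(urls))
    return counts


# Hypothetical log: prompt -> URLs cited in the answer, recorded by hand.
observations = {
    "best youtube analytics platforms": [
        "https://www.example.com/youtube-analytics",
        "https://reviews.example.org/top-tools",
    ],
    "how do I track youtube channel growth": [
        "https://example.com/channel-growth",
    ],
}

counts = citation_counts(observations)
for domain, n in counts.most_common():
    print(f"{domain}: cited in {n} of {len(observations)} prompts")
```

Counting each domain once per prompt, rather than once per URL, keeps a single answer with several links to the same site from inflating the tally.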

Search intent analysis has always been important in SEO, but AI optimization requires much finer granularity. You’re not just categorizing intent as informational or commercial. You’re understanding the specific situation, emotional state, and real-world context behind each query.

Conversational keywords naturally emerge from this kind of research. Instead of targeting “home office setup tips,” I might optimize for “how should I set up my home office if I have a small apartment and back problems.” That’s a real prompt people type into AI assistants, and it requires specific, nuanced content to answer well.

I now keep a running document of actual prompts I observe people using when they discuss topics in my niche on Reddit, forums, and social media. Those real world questions become my optimization targets. They’re always more specific, more emotionally informed, and more practically useful than anything a keyword research tool suggests.
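That running document is easier to learn from if each observation is stored as structured data. Here is a minimal sketch, assuming you record by hand which URLs an AI engine cited for each prompt. The field names and the sample rows are hypothetical, not output from any tool.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical hand-recorded observations: the prompt, the engine, and the URLs it cited.
observations = [
    {
        "prompt": "how should I set up my home office if I have a small apartment and back problems",
        "engine": "perplexity",
        "cited_urls": [
            "https://example.com/small-office-ergonomics",
            "https://example.org/back-pain-desk-setup",
        ],
    },
    {
        "prompt": "what desk works for a tiny apartment",
        "engine": "chatgpt",
        "cited_urls": ["https://example.com/compact-desks"],
    },
]


def cited_domain_counts(rows):
    """Count how many observed answers cite each domain (at most once per answer)."""
    counts = Counter()
    for row in rows:
        domains = {urlparse(url).netloc.lower() for url in row["cited_urls"]}
        counts.update(domains)
    return counts


counts = cited_domain_counts(observations)
for domain, n in counts.most_common():
    print(f"{domain}: cited in {n} of {len(observations)} observed answers")
```

Domains that keep showing up across many prompts are the ones worth studying for structure and tone.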

The shift from keywords to prompts isn’t just a tactical change. It’s a philosophical one. It pushes you toward writing for real people with real problems instead of writing for algorithms looking for specific word patterns. That’s ultimately better for everyone, including your search visibility.

How to Choose AI-Powered Search Engine Optimization Tools for Your Business (A Decision Framework)

One of the most common mistakes I see when people explore AI search optimization is jumping straight to the most popular tool they read about somewhere online. They sign up, get overwhelmed by features they don’t actually need, and either waste money or give up entirely, usually within the first month.

Choosing the right AI-powered search engine optimization tools isn’t about finding the “best” tool in some universal sense. It’s about finding the right tool for your specific situation. Your business type, budget, technical comfort level, and primary goals all determine which tools will actually move the needle for you.

I’ve worked with enough different setups to know that a tool that transforms results for an agency owner might be completely wrong for a solo blogger. And a tool perfect for an e-commerce store would leave a local dentist wondering what to do with half its features.

Let me walk you through a practical decision framework based on business type. This approach considers user intent behind your optimization goals and workflow compatibility with your existing processes.

Before you choose any tool, answer these four questions honestly:

1. What is your primary goal? Getting local citations, building content authority, managing client campaigns, or selling products through AI recommendations all require different capabilities — and different tools.

2. What is your realistic monthly budget? Free tools can cover a surprising amount of ground. Paid tools make sense only when the time they save or the results they deliver clearly justify the cost.

3. How technical are you? Some tools require code installation or schema implementation knowledge. Others work entirely through simple dashboards with no technical skill required.

4. What platform does your website run on? WordPress users have access to direct integrations that dramatically simplify implementation. Other platforms may require more manual work.

With those answers in mind, here’s how I’d approach tool selection by business type.

For Local Businesses: Citation Tracking and NAP Tools

If you run a local business, whether that’s a dental practice, a law firm, a restaurant, or any service business tied to a specific location, your AI optimization priority is completely different from a content publisher or e-commerce brand.

Your biggest challenge is what I call the trust gap. Local businesses often have websites that rank acceptably on Google but score poorly on AI visibility audits. The most common reason is NAP consistency problems.

NAP stands for Name, Address, and Phone number. When your business information appears differently across your website, Google Business Profile, Yelp, and local directories, AI engines see inconsistencies that lower their confidence in your business’s legitimacy.

I’ve seen cases where a business’s phone number was formatted three different ways across different platforms. The business itself was real and reputable. But from an AI engine’s perspective, those inconsistencies raised enough doubt to prevent citations.
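To make the phone number example concrete, here is a rough sketch of a NAP consistency check. It assumes you have copied your listings into a dictionary by hand. The business details are invented, the normalization rules are deliberately naive (for instance, a 10-digit number is assumed to be missing a "1" country code), and this is not how any particular audit tool works.

```python
import re


def normalize_phone(raw, default_country="1"):
    """Reduce a phone string to digits, adding an assumed country code to 10-digit numbers."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 10:  # assumption: 10 digits means the country code was omitted
        digits = default_country + digits
    return digits


def normalize_text(raw):
    """Lowercase, strip punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^\w\s]", "", raw.lower())
    return " ".join(cleaned.split())


def nap_report(listings):
    """For each NAP field, list the raw variants and classify the mismatch."""
    normalizers = {"name": normalize_text, "address": normalize_text, "phone": normalize_phone}
    report = {}
    for field, normalize in normalizers.items():
        raw_values = {entry[field] for entry in listings.values()}
        normalized = {normalize(value) for value in raw_values}
        report[field] = {
            "raw_variants": sorted(raw_values),
            # Same underlying value, written differently across platforms.
            "formatting_only": len(raw_values) > 1 and len(normalized) == 1,
            # Values that still differ after normalization.
            "real_conflict": len(normalized) > 1,
        }
    return report


# Invented listings for illustration only.
listings = {
    "website": {"name": "Bright Smile Dental", "address": "12 Main St., Springfield", "phone": "(555) 010-0199"},
    "google_business_profile": {"name": "Bright Smile Dental", "address": "12 Main St, Springfield", "phone": "555-010-0199"},
    "yelp": {"name": "Bright Smile Dental", "address": "12 Main St, Springfield", "phone": "+1 555 010 0199"},
}

report = nap_report(listings)
for field, result in report.items():
    print(field, result)
```

Even "formatting only" mismatches are worth fixing, since the goal is to publish one canonical version everywhere.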

For local businesses, prioritize tools that handle three specific capabilities:

1. NAP Consistency Auditing — The tool should audit your Name, Address, and Phone number across every platform you appear on and flag every formatting inconsistency, no matter how minor.

2. Local Schema Deployment — It should generate and deploy LocalBusiness and Organization schema markup that tells AI engines exactly who you are, where you’re located, and what you offer.

3. Content Gap Identification — It should surface the specific questions local customers ask that your website doesn’t currently answer, so you can build FAQ content that earns AI citations.
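For the schema item in the list above, it helps to see what LocalBusiness markup looks like. The sketch below builds a small JSON-LD object using schema.org property names and wraps it in a script tag. The business details are placeholders, and the helper function is my own illustration rather than the output of any specific tool.

```python
import json


def local_business_jsonld(name, street, city, region, postal_code, country, phone, url):
    """Build a minimal schema.org LocalBusiness object as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": country,
        },
    }


# Placeholder business details; use the exact NAP values you publish everywhere else.
data = local_business_jsonld(
    name="Bright Smile Dental",
    street="12 Main St",
    city="Springfield",
    region="IL",
    postal_code="62701",
    country="US",
    phone="+1-555-010-0199",
    url="https://example.com",
)

snippet = '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"
print(snippet)
```

The printed snippet is what would go into the page's HTML. Whatever generates it, the name, address, and phone values should match your other listings character for character.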

Look for tools that offer AI visibility scoring so you can measure your starting point and track improvement. The score itself matters less than the specific issues it surfaces and whether the tool gives you clear guidance on fixing them.

One practical approach I’ve seen work well for local businesses is using AI audit tools to generate branded PDF reports showing your current visibility score and recommended fixes. This gives you a concrete document to work through methodically rather than a vague sense that optimization needs to happen.

The tools best suited for local businesses tend to be straightforward to use without deep technical knowledge. You shouldn’t need a developer to implement the recommendations. If a tool’s suggested fixes require coding expertise you don’t have, look for one that offers WordPress integration or automatic schema deployment instead.

For Content Publishers: Content Optimization and GEO Tracking

If you run a blog, news site, educational platform, or any content-focused website, your tool selection should center on two capabilities: optimizing content structure for AI readability and tracking how often your content gets cited by AI engines.

Content publishers face a specific challenge. You might produce excellent, thoroughly researched articles that rank well on Google but never get cited by ChatGPT or Perplexity. The reason is usually structural rather than qualitative. The information is good but it isn’t packaged in a way AI engines can easily extract and cite.

I learned this lesson after spending weeks puzzling over why a competitor with weaker traditional SEO metrics kept getting cited for topics where my articles were significantly stronger. When I compared our content structures side-by-side, the gap was immediately obvious. Their articles led with explicit Quick Answer sections, expanded FAQ markup, and conversational headings that directly matched how people phrase questions to AI assistants. Mine used traditional SEO structure optimized for Google.

For content publishers, the tools I’d prioritize are:

Content optimization platforms that analyze your articles against top cited content for your target topics. These tools show you structural improvements to make, not just keyword suggestions. They’ll tell you to add a direct answer in the opening paragraph, restructure your FAQ section, or make your headings more conversational.

GEO tracking tools that monitor how often your specific articles get cited across ChatGPT, Perplexity, Google AI Overviews, and other AI platforms. Without this data, you’re optimizing blind. With it, you can see which content types and structural approaches generate the most citations and double down on what works.

Prompt research capabilities that reveal the specific questions people type into AI engines about your topics. These prompts become your new optimization targets, often very different from what traditional keyword research surfaces.

The workflow compatibility factor matters a lot for publishers producing regular content. Look for tools that integrate into your existing content creation process rather than requiring a separate optimization workflow after publishing. The best tools for publishers work alongside your writing, not after it.

For Agencies: Audit and White Label Reporting Tools

Agency owners have unique needs that most AI SEO tool lists completely overlook. You’re not just optimizing your own content. You’re managing multiple client websites, demonstrating results to clients who may not understand AI search, and looking for ways to scale your services efficiently.

The tool features that matter most for agencies are client reporting, white label options, and the ability to generate clear deliverables that non-technical clients can understand and appreciate.

I’ve seen agencies use AI visibility audit tools in a particularly clever way. When prospecting for new clients, they run a quick AI visibility audit on the prospect’s website and export the results as a branded PDF report. This report shows the prospect their current AI visibility score, specific issues hurting their performance, and a clear roadmap for improvement.

This approach works because it gives the prospect concrete evidence of a problem they probably didn’t know they had. Most business owners in 2026 know they should be thinking about AI search but have no idea how to measure or improve their visibility. Showing up with an actual score and specific recommendations positions you as the expert who can solve a real problem.

For client management, prioritize tools that let you track multiple websites from a single dashboard. Switching between separate accounts for each client creates unnecessary complexity and makes it harder to spot patterns across your portfolio.

White label reporting matters if you present reports under your own brand. Some AI SEO tools let you generate client facing reports with your agency’s logo and colors, which looks far more professional than sharing raw tool screenshots.

The technical depth of reporting also matters. Agency clients range from sophisticated marketing managers who want detailed data to small business owners who just want to know if things are improving. Look for tools that offer both detailed technical reports and simplified executive summaries that communicate results without requiring SEO knowledge to interpret.

Workflow compatibility for agencies means looking at how tools handle bulk actions. Can you run audits on multiple client sites simultaneously? Can you schedule regular reports to send automatically? Can you implement recommendations efficiently without manually touching every client site? These efficiency features separate tools built for individual use from those genuinely designed for agency workflows.

For E-commerce: Product Listing Optimization

E-commerce businesses face a distinct AI optimization challenge. You’re not just trying to get informational content cited. You’re trying to get your products recommended when people ask AI engines for purchasing advice.

When someone asks ChatGPT “what’s the best laptop bag for daily commuting,” the AI doesn’t just list search results. It describes specific products with features and reasons why they suit the use case. Getting your products into those recommendations requires a specific type of optimization that general SEO tools don’t address.

The foundation of e-commerce AI optimization is structured data. Product schema tells AI engines exactly what your product is: what it costs, what its features are, who it’s designed for, and what makes it different from alternatives. Without that structured layer, AI engines struggle to extract the specific information needed to recommend your products accurately, so they are essentially guessing and will often recommend something else instead.

I’ve seen product pages with excellent photography, compelling copy, and strong conversion rates completely invisible in AI search simply because they lacked proper structured data. The human experience of the page was excellent. The AI’s ability to understand and cite the page was nearly zero.
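To show what that structured layer can look like, here is a minimal sketch that builds a schema.org Product object with an Offer and an optional AggregateRating. The product is made up, and the `missing_fields` checklist is a hypothetical helper of mine, not an official list of required properties.

```python
import json


def product_jsonld(name, description, price, currency, in_stock,
                   rating=None, review_count=None, category=None):
    """Build a schema.org Product object as a plain dict."""
    availability = "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": availability,
        },
    }
    if category:
        data["category"] = category
    if rating is not None and review_count:
        data["aggregateRating"] = {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        }
    return data


def missing_fields(data, wanted=("category", "aggregateRating")):
    """Return fields from a hypothetical checklist that the object does not include."""
    return [field for field in wanted if field not in data]


# Made-up product for illustration.
bag = product_jsonld(
    name="Commuter Laptop Bag 15",
    description="Water-resistant 15-inch laptop bag with a padded sleeve, built for daily train and bike commutes.",
    price=79,
    currency="USD",
    in_stock=True,
    category="Laptop Bags",
)

print(json.dumps(bag, indent=2))
print("Still missing:", missing_fields(bag))
```

Notice that the description names the use case (daily commuting), which is the kind of detail a conversational product question tends to hinge on.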

For e-commerce businesses, look for tools that handle these specific needs:

Product schema generation and validation that ensures your product listings include all the structured data fields AI engines use to understand and recommend products. This includes price, availability, ratings, specifications, and category information.

Visual content optimization because AI engines increasingly process images as well as text. Your product images should include proper alt text, file names, and surrounding context that helps AI understand what’s shown. Some tools now specifically optimize visual content for AI comprehension.

Competitive product monitoring that tracks when competitor products get recommended by AI engines and analyzes why. Understanding what structured data and content signals make competitors more recommendable helps you close the gap.
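The alt text point in the list above is easy to check yourself before paying for a tool. This is a small sketch using Python's standard-library HTML parser to flag images with missing or empty alt text. The sample HTML is invented, and a real audit would fetch your live pages instead.

```python
from html.parser import HTMLParser


class AltTextAudit(HTMLParser):
    """Collect the src of every <img> whose alt attribute is missing or blank."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        alt = (attr_map.get("alt") or "").strip()
        if not alt:
            self.missing.append(attr_map.get("src", "(no src)"))


# Invented product page fragment.
sample_html = """
<div class="gallery">
  <img src="/img/bag-front.jpg" alt="Grey 15-inch commuter laptop bag, front view">
  <img src="/img/IMG_0042.jpg" alt="">
  <img src="/img/bag-strap.jpg" />
</div>
"""

audit = AltTextAudit()
audit.feed(sample_html)
print("Images without alt text:", audit.missing)
```

The second image also illustrates the file name issue: `IMG_0042.jpg` tells a crawler nothing about what the picture shows.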

The user intent behind product searches in AI engines tends to be highly specific. People describe their exact situation, constraints, and preferences when asking AI for product recommendations. Your product content and schema need to address those specific scenarios, not just list generic features.

Workflow compatibility for e-commerce means considering whether tools integrate with your platform. Shopify, WooCommerce, and other major platforms have different integration options. A tool that works beautifully with one platform might require extensive custom work on another. Confirm compatibility before committing to any tool.

The common thread across all four business types is this: the right tool fits your specific situation rather than being theoretically impressive on a feature list. Start with your primary goal, match it to the capabilities you actually need, and choose the simplest tool that genuinely delivers those capabilities within your budget.

Best AI Content Optimization Tools for Search Engine Visibility: In-Depth Reviews

Finding the right tools to optimize content for AI search engines took me longer than I’d like to admit. I tested dozens of platforms over the past year, sat through countless demos, and made some expensive mistakes along the way.

What I discovered is that the best ai content optimization tools for search engine visibility aren’t necessarily the most famous ones. Some of the most effective tools I use today weren’t on any major listicle when I found them. They were mentioned by practitioners in forums and videos who had actually tested them under real conditions.

I’ve organized my recommendations into six categories based on what each tool actually does: AI Citation Tracking, Content Optimization and Writing, Research and Keyword Tools, Technical Audit and Schema Tools, Automation and Workflow Tools, and Visual and Engagement Tools. This matters because most people try to find one tool that does everything. That approach consistently produces mediocre results. Specialized tools for specific tasks produce dramatically better outcomes.

Let me walk you through what I’ve found actually works.

AI Citation Tracking and Visibility Tools: Monitor Your AI Search Results

These are the tools to optimize content for AI search engines in terms of monitoring and measurement. You can’t improve what you don’t track, and most traditional analytics tools have no idea what’s happening in AI search.

Writesonic (AI Visibility and GEO Tracking)

Writesonic has evolved well beyond its origins as a content generation tool. Its GEO tracking features now let you monitor how often your brand appears in AI-generated answers across ChatGPT, Perplexity, and Google’s AI Overviews, the three platforms that currently drive the most AI search traffic.

The feature I find most valuable is the share of voice measurement. It shows what percentage of relevant AI answers mention your brand compared to competitors. Watching that number change as I implement optimizations gives me concrete evidence of what’s working.
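If you want to sanity-check a share of voice number, the underlying arithmetic is simple. The sketch below assumes you have saved a handful of AI answers as text and counts the percentage that mention each brand. The brand names and answers are invented, and this naive substring match is my own approximation, not a description of how Writesonic calculates its metric.

```python
def share_of_voice(answers, brands):
    """Percentage of answers that mention each brand (case-insensitive substring match)."""
    total = len(answers)
    result = {}
    for brand in brands:
        hits = sum(1 for text in answers if brand.lower() in text.lower())
        result[brand] = round(100 * hits / total, 1) if total else 0.0
    return result


# Invented answers and brand names for illustration.
answers = [
    "For daily commuting, many reviewers suggest the Acme Carry bag.",
    "Acme Carry and NorthPack both make durable laptop bags.",
    "A padded sleeve matters more than the brand, but Acme Carry is a common pick.",
    "Look for water resistance and a luggage strap.",
]

sov = share_of_voice(answers, ["Acme Carry", "NorthPack"])
print(sov)
```

Re-running the same prompts after each round of changes gives you a trend line, which is what I actually watch.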

Writesonic also identifies sources that mention your competitors but not you. This is citation gap analysis, and it’s genuinely useful for finding outreach opportunities and content topics that could earn you new mentions.

It also refreshes existing content to improve both traditional SEO rankings and GEO performance at the same time. For anyone managing a content library that needs updating, this dual optimization approach saves significant time.

Best for: Content publishers and brands wanting to track AI citation performance and identify growth opportunities.

Pricing: Starts around $19 per month for basic plans with GEO features on higher tiers.

Profound

Profound describes itself as helping brands “see what AI sees about them.” That framing captures what makes it different from other tracking tools.

Where most analytics tools show you website traffic and rankings, Profound uses marketing language familiar to anyone with a traditional analytics background. It shows AI referrals, attribution data, and how users who arrive via AI recommendations behave differently from other visitors.

The feature that stands out to me is Reddit monitoring. Profound tracks your brand’s visibility on Reddit specifically because Reddit content gets cited heavily by AI engines. Many brands ignore Reddit entirely, not realizing that conversations happening there directly influence whether AI systems recommend them.

Profound also optimizes product listings for AI shopping recommendations, which makes it particularly relevant for e-commerce businesses targeting younger demographics who increasingly use AI assistants for purchasing decisions.

Best for: Brands wanting deep attribution data and businesses with e-commerce or product visibility goals.

Rankscale.ai

Rankscale.ai focuses on granular rank tracking specifically for AI search visibility. Unlike broader platforms, it lets you track your visibility across specific AI engines, specific keywords, and specific regions separately.

This granularity matters more than it sounds. Your content might get cited regularly by Perplexity but rarely by ChatGPT, or perform well for informational queries but poorly for commercial ones. Generic tracking tools average everything together and hide those distinctions.

Best for: Businesses that need detailed AI search performance data broken down by platform and query type.

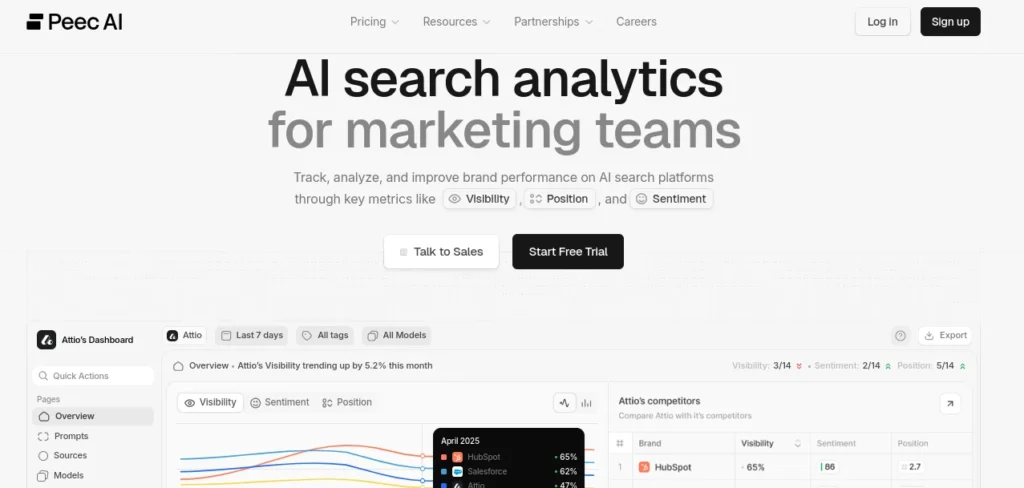

Peec AI

Peec AI focuses specifically on brand mention and citation tracking inside AI-generated answers. Unlike broader analytics platforms, it monitors how AI engines reference your brand, tracks the sentiment of those mentions, and sends alerts when your citation patterns shift. That makes it particularly valuable for established brands that want to protect visibility they’ve already built and risk losing AI citations to faster-moving competitors.

Best for: Brands with established market presence that want to protect and grow their AI mention frequency.

Content Optimization and AI Writing Tools: Create Content AI Engines Will Actually Cite

These content generation tools help you create and improve content that AI engines will actually cite. The distinction I’ve found is that the best tools in this category optimize for AI readability, not just keyword placement.

Frase.io

Frase.io has become my most used content optimization tool over the past eighteen months. What separates it from traditional SEO writing tools is how it analyzes content for AI comprehension rather than just keyword density.

When I put a target topic into Frase, it analyzes the top-ranking and top-cited content for that topic and shows me a comprehensive picture: the headings and questions that appear in authoritative sources, word count and header usage patterns, and, most usefully, the specific questions that successful content answers. That last point is what lets me structure articles to genuinely address what people want to know, rather than what I assume they want.

The internal linking suggestions are practical and specific. Rather than general advice to add more internal links, Frase tells me exactly which pages to link to and where in my content those links make sense.

For anyone just starting with AI content optimization, Frase provides enough guidance to significantly improve content quality without requiring deep technical SEO knowledge.

Best for: Content publishers and bloggers who want AI assisted content research and optimization guidance.

Pricing: Starts around $15 per month with a free trial available.

Surfer SEO

Surfer SEO provides real-time content scoring as you write. The Content Score feature evaluates your article against top-ranking content and gives you a numerical score that updates dynamically as you add or remove content elements. Think of it as a live grade for your SEO.

I use Surfer primarily for articles where I want to ensure comprehensive topical coverage. It’s particularly good at surfacing semantic terms and related concepts that should appear in a thorough article. The visual interface makes it easy to see what’s missing without breaking your writing flow.

Surfer has also added AI search optimization features that evaluate how well your content structure aligns with what AI engines prefer, not just traditional search signals.

Best for: Writers who want real time optimization feedback during the writing process.

Claude 4.6 Sonnet (Anthropic)

I want to be direct about something. I’ve tried multiple AI writing models for content creation, and Claude 4.6 Sonnet produces noticeably more natural long form content than alternatives I’ve tested.

The reason matters for our purposes. Content that reads naturally gets cited more often because AI engines evaluate readability and coherence as quality signals. Stiff robotic writing, even if technically accurate, scores lower on the quality assessments AI engines make.

I use Claude specifically for drafting longer explanatory content where natural flow and logical progression matter. The output still requires human editing and expertise addition, but the baseline quality is high enough that the revision process is far more efficient.

Best for: Anyone using AI assistance for content drafting who wants natural sounding output that passes AI quality assessments.

Jasper AI

Jasper AI offers a comprehensive platform combining content generation with SEO optimization guidance. It includes brand voice settings that help maintain consistency across large content libraries, which matters for authority building.

Best for: Teams producing high volume content who need consistency and built in SEO guidance.

Research and Keyword Tools

These keyword research tools have evolved to address the prompt based nature of AI search. The best ones now help you understand not just what people search for but what they ask AI assistants.

Perplexity AI

I want to share what Craig Campbell, a well respected SEO authority with years of industry experience, said about his tool preferences. When asked which AI tool he found most valuable for SEO work, he named Perplexity AI without hesitation.

His reason was specific and compelling. Perplexity was the first major AI platform to clearly show every source it used when constructing an answer. While ChatGPT has since added source citation features, Perplexity’s implementation remains the clearest and most useful for research purposes.

I use Perplexity as both a research tool and a testing environment. For research, it lets me quickly understand how information from across the web synthesizes into coherent answers on my target topics. I can see which sources get cited, analyze what makes those sources trustworthy, and identify patterns I can apply to my own content.

For testing, I type in the prompts my target audience uses and observe whether my content appears in the cited sources. When it doesn’t, I analyze what the cited sources are doing differently.

The deep analysis capability that Campbell highlighted is what makes Perplexity genuinely useful rather than just another search interface. It doesn’t just find information. It reasons through it, contextualizes it, and presents it in ways that reveal how AI engines think about topics.

Best for: Researchers and SEO professionals who want to understand AI search behavior from the inside.

Pricing: Free tier available. Perplexity Pro costs around $20 per month and includes additional research capabilities.

Semrush AI Toolkit

Semrush has integrated AI search optimization directly into its existing platform, which makes it valuable for anyone already using Semrush for traditional SEO. Rather than switching between separate tools, you can track both traditional rankings and AI visibility from one dashboard.

The Prompt Research Tab is the feature I find most distinctive. It shows you exactly what users are asking AI about specific topics, which directly informs your content strategy. Instead of guessing what prompts to optimize for, you see actual query data.

The Share of Intent analysis categorizes your search visibility by intent type: informational, navigational, commercial, and transactional. This matters because your AI optimization strategy should differ depending on which intent types you’re targeting.

Best for: Existing Semrush users who want AI search tracking without adopting an entirely separate tool.

CanIRank.com

CanIRank does something most competitor analysis tools don’t. It doesn’t just show you problems with your SEO and AI visibility. It provides specific solutions rather than leaving you to figure out what to do with the diagnostic data.

You add your website and target keywords, and it crawls your site alongside competitor sites. It identifies content opportunities, link building possibilities, and technical issues. The key difference is that it pairs each issue with actionable recommendations rather than just flagging problems.

For anyone who finds traditional SEO audit tools overwhelming because of the gap between diagnosis and action, CanIRank’s solution-focused approach makes optimization genuinely manageable.

Best for: Small business owners and solopreneurs who want specific guidance rather than just data.

Technical Audit and Schema Tools

Technical issues are often the invisible barrier between good content and AI citations. These tools surface and fix the structural problems that prevent AI engines from understanding and trusting your site.

Serpsling

Serpsling focuses specifically on Answer Engine Optimization audits. When you run a site through Serpsling, it produces a comprehensive AI visibility score along with specific technical issues affecting your performance.

The real world example that convinced me of Serpsling’s value involved a dental practice website that received a score of 59 out of 100. The audit identified missing schema markup and NAP inconsistencies as the primary issues. Neither of these problems would have appeared critical in a traditional SEO audit, but both were significantly limiting the site’s AI search visibility.

What I appreciate about Serpsling is the practical output format. You can export audit results as branded PDF reports, which creates a clear deliverable showing exactly what needs to be fixed and why. For agencies, this serves as both a client deliverable and a prospecting tool.

Serpsling also generates missing content directly within the platform. When the audit identifies questions your website doesn’t answer, you can create that content and add appropriate FAQ schema without leaving the tool.
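For readers who have never seen FAQ schema, here is a minimal sketch of the markup itself, built with schema.org's FAQPage, Question, and Answer types. The questions and answers are invented, and this is a generic illustration, not a reproduction of what Serpsling generates.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Invented questions a dental practice site might answer.
faq = faq_jsonld([
    ("Do you accept new patients?", "Yes, we accept new patients on weekdays."),
    ("Do you offer emergency appointments?", "Same-day emergency slots are available by phone."),
])

print(json.dumps(faq, indent=2))
```

The answers in the markup should match the visible FAQ text on the page word for word.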

Best for: Agencies using AI visibility audits as client deliverables, and local businesses needing comprehensive technical fixes.

Search Atlas Auto

Search Atlas Auto takes a different approach to technical SEO. Instead of auditing and suggesting fixes, it deploys a single line of JavaScript code to your website that automatically implements on-page optimization continuously.

The way it works is genuinely impressive. Once the code is installed through Google Tag Manager or directly in your site’s head section, it starts analyzing and optimizing your pages. It generates improved title tags for pages lacking them. It reads your images and writes descriptive alt text. It adjusts heading structures and improves keyword alignment automatically.

The automation focuses on backend technical elements that are invisible to visitors. Your website design and user experience stay exactly the same. What changes is how search engines and AI crawlers read and understand your content.

Pricing runs from $99 per month for a single site up to $1,999 per month for fifty sites, making it accessible for individual sites and scalable for agencies managing large client portfolios.

Best for: Agencies and businesses wanting continuous automated technical optimization without manual implementation work.

Google Search Console

I always mention Google Search Console when discussing technical SEO tools because it provides foundational data that every other tool builds on. It’s completely free and shows you exactly how Google crawls, indexes, and understands your site.

For AI search optimization specifically, Search Console helps you identify indexing issues that would prevent any search engine, including AI crawlers, from accessing your content. It also shows performance data that informs which content deserves optimization priority.

On-page optimization decisions become much clearer when you understand which pages Google already values and which ones struggle to get crawled properly.

Best for: Every website owner regardless of size or budget. This is the non-negotiable starting point.

Automation and Workflow Tools

These tools handle repetitive optimization tasks automatically, freeing you to focus on strategy and content quality rather than mechanical execution.

N8N (Workflow Automation)

N8N is workflow automation software that lets you build SEO automation systems connecting multiple tools and AI models. The system I’ve seen work most impressively for SEO automation costs less than one dollar per week to run while replacing processes that previously required thousands of dollars in agency fees.

Here’s how a complete content automation workflow functions with N8N. The system pulls content topics from a planning spreadsheet, uses one AI model for research planning, a second for finding accurate facts from live web sources, and a third specifically for writing natural sounding content. It generates relevant images, formats everything properly, and publishes directly to WordPress.

The multi-model approach matters. Using GPT-5 for planning, Perplexity for research, and Claude for writing takes advantage of each model’s specific strengths rather than relying on one model for everything.
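N8N workflows are built visually, but the shape of the multi-model pipeline is easier to see in a few lines of code. This is a conceptual sketch only: each stage is a plain function you pass in, and the stand-in functions below return canned strings rather than calling any real model, API, or WordPress endpoint. All names here are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    topic: str
    outline: str = ""
    facts: str = ""
    body: str = ""


def run_pipeline(topic, plan, research, write, publish):
    """Run one topic through plan -> research -> write -> publish, one stage per model."""
    draft = Draft(topic=topic)
    draft.outline = plan(topic)                      # planning model
    draft.facts = research(draft.outline)            # research model with live sources
    draft.body = write(draft.outline, draft.facts)   # writing model
    publish(draft)                                   # e.g. hand off to a CMS
    return draft


# Stand-in stages so the sketch runs without any external service.
def fake_plan(topic):
    return f"Outline for: {topic}"


def fake_research(outline):
    return f"Facts gathered for [{outline}]"


def fake_write(outline, facts):
    return f"{outline}\n\n{facts}\n\n(Article body goes here.)"


published = []

draft = run_pipeline(
    "home office setup for small apartments",
    plan=fake_plan,
    research=fake_research,
    write=fake_write,
    publish=published.append,
)
print(draft.body)
```

Keeping each stage swappable is the point: you can change which model handles planning, research, or writing without touching the rest of the workflow.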

For anyone comfortable with a moderate learning curve, N8N offers workflow integration capabilities that no single purpose SEO tool can match. The flexibility to connect any combination of tools creates optimization systems customized to your exact needs.

Best for: Technical users and agency owners wanting to build scalable automated SEO workflows.

Link Robot (WordPress)

Internal linking is one of those SEO tasks that matters significantly but gets neglected because it’s tedious to do manually. Link Robot automates the identification of internal linking opportunities on WordPress sites.

You add your website, it crawls your content, and it surfaces specific places where you could add links to other pages on your site. The suggestions include the specific pages to link to and the anchor text that makes sense in context.

The limitation worth knowing is that Link Robot works exclusively with WordPress. If your site runs on a different platform, this tool won’t be usable for you.

Best for: WordPress site owners who want systematic internal linking without manual audit work.

Vercel (Hosting for AI Crawl Optimization)

Vercel isn’t an SEO tool in the traditional sense. It’s a hosting platform. I include it here because hosting speed has a direct impact on how often and how deeply AI crawlers visit your site.

AI crawlers, like GPTBot and PerplexityBot, prioritize sites that respond quickly and reliably. Vercel’s built-in content delivery network and speed optimizations make your site more accessible to these crawlers, which increases the frequency of AI indexing.
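Speed only helps if these crawlers are allowed in to begin with, so it is worth confirming that your robots.txt doesn't block them. The sketch below uses Python's standard `urllib.robotparser` to test a robots.txt file against the two user agents named above. The sample rules and the example.com URLs are made up for illustration; paste in your own file contents to check a real site.

```python
from typing import Dict, Iterable
from urllib.robotparser import RobotFileParser


def crawler_access(
    robots_txt: str,
    url: str,
    agents: Iterable[str] = ("GPTBot", "PerplexityBot"),
) -> Dict[str, bool]:
    """Report whether each crawler may fetch the URL under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Hypothetical robots.txt: GPTBot is kept out of /private/ only.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /private/

User-agent: *
Allow: /
"""

private_page = crawler_access(SAMPLE_ROBOTS, "https://example.com/private/page")
blog_page = crawler_access(SAMPLE_ROBOTS, "https://example.com/blog/post")
```

This only tells you what the rules permit. It says nothing about how often a crawler actually visits, which is where hosting performance comes in.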

For anyone building new content projects or planning a hosting migration, Vercel’s performance characteristics make it worth considering specifically from an AI crawl frequency perspective.

Best for: Developers and technical site owners who want infrastructure that supports AI crawler accessibility.

Visual and Engagement Tools

Visual content increasingly influences AI search visibility, especially as AI engines become better at processing images. These tools help you create visual content that improves engagement and supports AI comprehension.

Napkin.ai

Napkin.ai does something surprisingly useful for content publishers. It converts plain text into visual formats like flowcharts, pie charts, mind maps, and process diagrams automatically.

The SEO value comes from two directions. First, visual content increases time on page and reduces bounce rates, which are engagement signals that both traditional search engines and AI engines factor into quality assessments. Second, unique visual content earns backlinks in ways that text alone rarely achieves.

My workflow with Napkin.ai involves taking complex explanatory content that I’ve already written and running it through to generate visual versions. The resulting diagrams often communicate the same information more clearly than paragraphs could. I screenshot the visuals and embed them in the article alongside the text, giving readers multiple ways to absorb the information.

Best for: Content publishers who want to increase article engagement and earn links through distinctive visual assets.

Design Kit

Design Kit uses AI to generate professional lifestyle product images from simple text descriptions. You provide a product image and a descriptive prompt, and it generates multiple scene variations showing your product in realistic settings.

For e-commerce businesses, this capability addresses a genuine operational challenge. Professional lifestyle photography is expensive and time-consuming. Waiting weeks for photography results creates delays in launching new products or refreshing existing listings.

The ability to generate consistent brand imagery across different scenes matters for AI search specifically because visual consistency is one signal AI engines use when evaluating brand authority and authenticity. A product catalog with coherent visual style reads as more established and trustworthy than one with inconsistent imagery.

Best for: E-commerce businesses that need professional product imagery at scale without photography costs.

Each tool category I’ve described addresses a specific optimization need. The most effective AI search optimization setups I’ve seen combine tools from multiple categories rather than relying on any single platform to do everything. Start with citation tracking to establish a baseline, add content optimization to improve what you publish, implement technical fixes to remove barriers, and consider automation once you’ve validated what works manually.

Free AI SEO Tools That Actually Work (The Budget-Friendly Stack)

I want to be honest with you about something before diving into this section. When I first started exploring AI search optimization, I assumed I needed to spend hundreds of dollars per month on premium tools to see any meaningful results. That assumption cost me time because I kept delaying action while waiting until I could “afford the right tools.”

The truth is more encouraging. In my own testing across multiple websites, a well-chosen stack of free AI tools for SEO delivered roughly 70% of the results that paid tools provide. The free stack has real limitations, and I’ll be transparent about those. But for anyone starting out or working with a tight budget, the free options available today are genuinely capable.

The key is knowing which free tools are worth your time and how to combine them effectively. Using a dozen mediocre free tools creates confusion. Using the five below in the right sequence creates a real optimization workflow.

The Free Starter Stack (Get 70% of Results at $0)

Here’s the combination I recommend to anyone who asks me where to start with AI search optimization on a zero budget. Each tool covers a specific need, and together they create a foundation solid enough to produce real improvements in both traditional rankings and AI search visibility.

Google Search Console

Google Search Console is the non-negotiable starting point for any SEO work, including AI optimization. It’s completely free, it connects directly to Google’s own data about your site, and it surfaces information no third-party tool can replicate.

I use Google Search Console for three things in my AI optimization workflow. First, I check which pages Google is successfully crawling and indexing. If Google struggles to access your content, AI crawlers will too. Any indexing issues you find here deserve immediate attention before you optimize anything else.

Second, I review the search queries bringing traffic to each page. This reveals gaps between what people search for and what your pages currently address. Those gaps often correspond exactly to the prompts people use when asking AI engines similar questions.

Third, I monitor Core Web Vitals and page experience signals. Page speed and technical health affect how frequently AI crawlers visit your site. Slow pages get crawled less often, which means your updates take longer to be recognized by AI search engines.

Setting up Google Search Console takes about ten minutes if you haven’t done it already. If your site is already connected, spend thirty minutes reviewing the Page indexing report (formerly Coverage), the Performance report, and the Core Web Vitals section. What you find there will shape every optimization decision that follows.

Semrush Free Tier

Semrush offers a meaningful free tier that provides keyword data, basic competitor analysis, and site auditing, capped at ten queries per day. That limit sounds restrictive, but for someone just starting with AI search optimization, ten focused daily queries produce more actionable insight than unlimited access to a tool you don’t know how to use yet.

The features I use most on the Semrush free tier are keyword overview for understanding search volume and difficulty, the site audit for flagging technical issues, and the organic research tool for analyzing what keywords competitor pages rank for.

For AI search optimization specifically, Semrush’s free tier helps you understand the traditional search landscape that AI engines use as a starting point when deciding which sources to cite. Knowing which competitors rank traditionally for your target topics tells you whose content AI engines are most likely already familiar with.

Google Gemini (Formerly Bard)

Here’s something I learned that genuinely surprised me. Neil Patel shared a specific keyword research prompt that works extremely well for identifying additional optimization opportunities, and it works on the free version of Google Gemini without needing any paid subscription.

The prompt is straightforward. You ask Gemini to analyze the content on a specific page and recommend other keywords you should target that are related to the ones you’re already pursuing but not currently ranking for. Then you follow up with a second prompt asking Gemini to categorize those keyword suggestions by informational, navigational, and transactional intent.

The reason for using two sequential prompts instead of one combined prompt matters. Giving the AI time to complete one task before moving to the next produces more thoughtful and complete responses. I’ve tested this and the two prompt approach consistently generates better keyword lists than asking everything at once.
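If you ever script this instead of typing it into the chat window, the same two-step structure looks like the sketch below. The prompt wording is my paraphrase of the description above, not the exact prompt Neil Patel shared, and `ask` is a hypothetical function you would supply yourself to send the conversation to whichever model you use. The part that matters is that the second prompt goes out only after the first answer is back and saved in the conversation history.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def two_step_keyword_research(
    page_url: str,
    ask: Callable[[List[Message]], str],  # hypothetical: sends history, returns reply
) -> Dict[str, str]:
    history: List[Message] = []

    # Step 1: ask for related keywords the page is not yet ranking for.
    first_prompt = (
        f"Analyze the content on {page_url}. Recommend other keywords I "
        "should target that are related to the ones this page already "
        "pursues but that it is not currently ranking for."
    )
    history.append({"role": "user", "content": first_prompt})
    keywords = ask(history)
    history.append({"role": "assistant", "content": keywords})

    # Step 2: only now ask for the intent breakdown of that list.
    second_prompt = (
        "Categorize those keyword suggestions by informational, "
        "navigational, and transactional intent."
    )
    history.append({"role": "user", "content": second_prompt})
    categorized = ask(history)

    return {"keywords": keywords, "categorized": categorized}
```

Passing `ask` in as a parameter keeps the sketch independent of any particular API and lets you dry-run it with a fake function first.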

This technique turns Gemini into a free keyword research tool that considers your actual content rather than just general topic data. The resulting keyword suggestions are genuinely tailored to your page, which makes them more relevant and actionable than generic keyword tool outputs.

Beyond keyword research, I use Gemini regularly to test how AI engines respond to prompts related to my content topics. When I ask Gemini a question that my articles answer and my content doesn’t appear in the response, that tells me something specific needs to change about how I’ve structured or positioned that content.

AlsoAsked

AlsoAsked visualizes the “People Also Ask” questions that appear in Google search results and maps the relationships between related questions. The free tier provides three searches per day, which is enough to research the question landscape around your most important topics.

For AI search optimization, AlsoAsked is valuable because the questions it surfaces closely resemble the prompts people type into AI assistants. When Google shows “People Also Ask” questions, those questions reflect real patterns in how people think and communicate about a topic. Those same thought patterns show up in AI search queries.

I use AlsoAsked to find questions my content should answer but currently doesn’t. Each unanswered question represents both an FAQ section opportunity and a potential AI citation if I answer it clearly and completely.

The visual map that AlsoAsked creates also helps me understand the scope of a topic. Seeing how questions branch from a central topic into related subtopics reveals content gaps that keyword tools and competitor analysis don’t always surface.

HubSpot Blog Topic Generator

HubSpot’s free Blog Topic Generator helps when you need content ideas beyond what keyword tools suggest. You enter a general subject, and it returns specific article topic variations you might not have considered.

I use it specifically for finding angles that align with conversational AI queries. The topic suggestions often have a natural, question-answering quality that maps well onto how people phrase prompts in ChatGPT or Perplexity.

It’s also useful for breaking out of optimization tunnel vision. After spending time focused on specific keywords, I sometimes lose sight of adjacent topics my audience cares about. Running a few subjects through HubSpot’s generator quickly surfaces ideas that expand my content planning in productive directions.

When to Upgrade to Paid (The ROI Tipping Point)

I promised honesty about the limitations of free tools, and here it is. The free stack I’ve described works well up to a certain scale. Beyond that scale, paid tools stop being a luxury and start being a practical necessity.

Let me explain where the real boundaries are, so you can make a clear-eyed decision about when upgrading makes sense for your situation.

The citation tracking gap

The most significant limitation of the free stack is that it can’t directly monitor your AI search citations. You can test manually by typing prompts into Gemini or Perplexity and seeing whether your content appears. But that’s not systematic tracking.

If you’re actively working on AI search optimization, not knowing how often you get cited makes it very difficult to measure whether your efforts are working. You might be making all the right changes and getting significantly more citations without knowing it. Or you might be working hard with no improvement and not realizing it until months have passed.

Paid tools like Writesonic’s GEO tracker, Profound, or Rankscale.ai solve this problem by monitoring citations systematically across multiple AI platforms. Once you’re publishing ten or more articles per month and actively working on AI visibility, the time cost of manual citation checking almost certainly exceeds the monthly cost of a tracking tool.

The query limit problem

Both Semrush’s free tier and AlsoAsked’s free tier impose daily query limits that become genuinely restrictive as you scale content production. If you’re researching keywords for twenty articles per month, a cap of ten free Semrush queries per day creates a constant bottleneck that slows down your planning.

The ROI calculation is straightforward at that point. If your time is worth $50 per hour and query limits cause you to spend an extra five hours per month working around them, you’re losing $250 in productive time to avoid a $130 tool subscription. That math doesn’t favor the free option anymore.
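The same arithmetic is easy to rerun with your own numbers. This small Python sketch uses the figures from the example above ($50 per hour, five hours lost, a $130 subscription). The function names are just illustrative, and the break-even helper is an addition that shows how many lost hours per month it takes before the subscription pays for itself.

```python
def monthly_workaround_cost(hourly_rate: float, hours_lost: float) -> float:
    """Value of the time spent working around free-tier limits."""
    return hourly_rate * hours_lost


def upgrade_pays_off(
    hourly_rate: float, hours_lost: float, subscription_cost: float
) -> bool:
    """True when the lost time is worth more than the subscription."""
    return monthly_workaround_cost(hourly_rate, hours_lost) > subscription_cost


def break_even_hours(hourly_rate: float, subscription_cost: float) -> float:
    """Hours of lost time per month at which the subscription breaks even."""
    return subscription_cost / hourly_rate


lost = monthly_workaround_cost(50, 5)      # 250
worth_it = upgrade_pays_off(50, 5, 130)    # True
threshold = break_even_hours(50, 130)      # 2.6
```

At $50 per hour, the $130 subscription breaks even at 2.6 hours of lost time per month, so anything beyond that favors upgrading.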

The automation threshold

Free tools require manual execution of every task. You manually check keywords. You manually test prompts. You manually audit pages. You manually review citations. That’s completely manageable when you’re running one or two websites at a small scale.

As soon as you’re managing multiple sites, producing content regularly, or trying to track optimization progress across a meaningful number of pages, manual execution becomes the bottleneck that limits everything else. This is where budget optimization thinking shifts from “how do I avoid paying” to “what’s the minimum I need to spend to remove the bottleneck.”

Paid automation tools like Search Atlas Auto or workflow systems built in N8N handle recurring technical optimization tasks continuously. The scaling strategy changes from “do more work” to “build systems that do the work.” That shift is only possible with tools that go beyond what free tiers provide.

My honest recommendation

Start with the free stack and use it seriously for at least sixty days. Document your starting point by taking screenshots of your AI visibility, your traditional rankings, and your content structure. Then implement optimizations using the free tools and track your progress manually.
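Manual tracking works better when every prompt test lands in the same simple log. Here is a minimal Python sketch of one way to structure that log and turn it into a citation rate per platform. The field names and sample entries are hypothetical, and it is no substitute for a paid tracker. It just keeps sixty days of manual checks comparable.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Check:
    date: str      # ISO date of the manual test
    platform: str  # e.g. "Gemini" or "Perplexity"
    prompt: str    # the question you typed in
    cited: bool    # did your content appear in the answer?


def citation_rate_by_platform(checks: List[Check]) -> Dict[str, float]:
    """Share of logged prompts on each platform where you were cited."""
    totals: Dict[str, int] = defaultdict(int)
    hits: Dict[str, int] = defaultdict(int)
    for check in checks:
        totals[check.platform] += 1
        hits[check.platform] += int(check.cited)
    return {platform: hits[platform] / totals[platform] for platform in totals}


# Made-up sample entries for illustration only.
log = [
    Check("2026-01-05", "Gemini", "best free ai seo tools", True),
    Check("2026-01-05", "Gemini", "how to get cited by ai search", False),
    Check("2026-01-05", "Perplexity", "best free ai seo tools", False),
]
rates = citation_rate_by_platform(log)
```

Rerun the same prompts on a fixed schedule so the rate you compute at day sixty is comparable to your baseline.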

After sixty days, assess what’s limiting you most. If it’s citation tracking, invest first in a monitoring tool. If it’s research speed, invest in expanded Semrush access. If it’s content production speed, consider a content optimization or automation tool.